It was one year ago that I first blogged about the failure rates of specific models of hard drives, so now is a good time for an update.

At Backblaze, as of December 31, 2014, we had 41,213 disk drives spinning in our data center, storing all of the data for our unlimited backup service. That is up from 27,134 at the end of 2013. This year, most of the new drives are 4TB drives, and a few are the new 6TB drives.

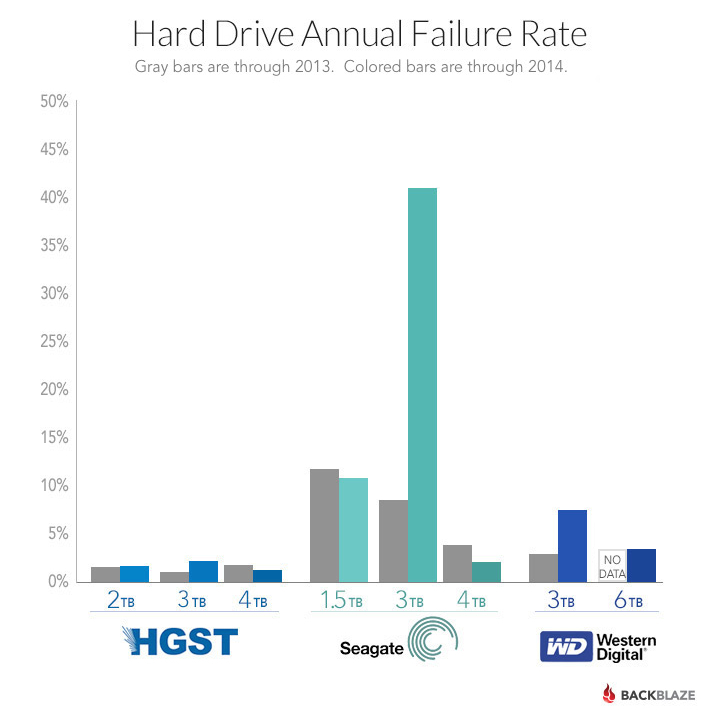

Hard Drive Failure Rates for 2014

Let’s get right to the heart of the post. The table below shows the annual failure rate through the year 2014. Only models where we have 45 or more drives are shown. I chose 45 because that’s the number of drives in a Backblaze Storage Pod and it’s usually enough drives to start getting a meaningful failure rate if they’ve been running for a while.

Backblaze Hard Drive Failure Rates Through December 31, 2014

| Name/Model | Size | Number of Drives | Average Age in Years | Annual Failure Rate | 95% Confidence Interval |
|---|---|---|---|---|---|
| HGST Deskstar 7K2000 (HDS722020ALA330) | 2TB | 4,641 | 3.9 | 1.1% | 0.8% – 1.4% |
| HGST Deskstar 5K3000 (HDS5C3030ALA630) | 3TB | 4,595 | 2.6 | 0.6% | 0.4% – 0.9% |
| HGST Deskstar 7K3000 (HDS723030ALA640) | 3TB | 1,016 | 3.1 | 2.3% | 1.4% – 3.4% |
| HGST Deskstar 5K4000 (HDS5C4040ALE630) | 4TB | 2,598 | 1.8 | 0.9% | 0.6% – 1.4% |
| HGST Megascale 4000 (HGST HMS5C4040ALE640) | 4TB | 6,949 | 0.4 | 1.4% | 1.0% – 2.0% |
| HGST Megascale 4000.B (HGST HMS5C4040BLE640) | 4TB | 3,103 | 0.7 | 0.5% | 0.2% – 1.0% |
| Seagate Barracuda 7200.11 (ST31500341AS) | 1.5TB | 306 | 4.7 | 23.5% | 18.9% – 28.9% |
| Seagate Barracuda LP (ST31500541AS) | 1.5TB | 1,505 | 4.9 | 9.5% | 8.1% – 11.1% |
| Seagate Barracuda 7200.14 (ST3000DM001) | 3TB | 1,163 | 2.2 | 43.1% | 40.8% – 45.4% |
| Seagate Barracuda XT (ST33000651AS) | 3TB | 279 | 2.9 | 4.8% | 2.6% – 8.0% |
| Seagate Barracuda XT (ST4000DX000) | 4TB | 177 | 1.7 | 1.1% | 0.1% – 4.1% |
| Seagate Desktop HDD.15 (ST4000DM000) | 4TB | 12,098 | 0.9 | 2.6% | 2.3% – 2.9% |
| Seagate 6TB SATA 3.5 (ST6000DX000) | 6TB | 45 | 0.4 | 0.0% | 0.0% – 21.1% |
| Toshiba DT01ACA Series (TOSHIBA DT01ACA300) | 3TB | 47 | 1.7 | 3.7% | 0.4% – 13.3% |
| Western Digital Red 3TB (WDC WD30EFRX) | 3TB | 859 | 0.9 | 6.9% | 5.0% – 9.3% |
| Western Digital 4TB (WDC WD40EFRX) | 4TB | 45 | 0.8 | 0.0% | 0.0% – 10.0% |
| Western Digital Red 6TB (WDC WD60EFRX) | 6TB | 270 | 0.1 | 3.1% | 0.1% – 17.1% |

Notes:

- The total number of drives in this chart is 39,696. As noted, we removed from this chart any model of which we had fewer than 45 drives in service as of December 31, 2014. We also removed Storage Pod boot drives. When these are added back in, we have 41,213 spinning drives.

- Some of the HGST drives listed were manufactured under their previous brand, Hitachi. We’ve been asked to use the HGST name and we have honored that request.
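As a quick check on the first note, the drive counts in the table can be summed and compared with the total number of spinning drives. This is only a sketch; the variable names are made up for illustration, and the numbers are copied from the table.

```python
# Drive counts copied from the table, in row order.
drive_counts = [
    4641, 4595, 1016, 2598, 6949, 3103,   # HGST
    306, 1505, 1163, 279, 177, 12098, 45,  # Seagate
    47,                                    # Toshiba
    859, 45, 270,                          # Western Digital
]

charted = sum(drive_counts)      # drives shown in the chart
all_spinning = 41213             # total stated in the post
excluded = all_spinning - charted  # boot drives plus models with fewer than 45 drives

print(f"charted: {charted:,}")
print(f"excluded from chart: {excluded:,}")
```

The difference is the number of boot drives and small-population models left out of the chart.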

What Is a Drive Failure for Backblaze?

A drive is recorded as failed when we remove it from a Storage Pod for one or more of the following reasons:

- The drive will not spin up or connect to the OS.

- The drive will not sync, or stay synced, in a RAID Array.

- The SMART stats we use show values above our thresholds.

Sometimes we’ll remove all of the drives in a Storage Pod after the data has been copied to other (usually higher-capacity) drives. This is called a migration. Some of the older Pods with 1.5TB drives have been migrated to 4TB drives. In general, migrated drives don’t count as failures because the drives that were removed are still working fine and were returned to inventory to use as spares.

This past year, there were several Pods where we replaced all the drives because the RAID storage was getting unstable, and we wanted to keep the data safe. After removing the drives, we ran each of them through a third-party drive tester. The tester takes about 20 minutes to check the drive; it doesn’t read or write the entire drive. Drives that failed this test were counted as failed and removed from service.
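The rules in this section can be sketched as a small classification function. This is not our actual tooling; the `Removal` fields and the `classify` function are hypothetical names chosen to mirror the criteria described above.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional


class Outcome(Enum):
    FAILED = auto()
    RETURNED_TO_SPARES = auto()


@dataclass
class Removal:
    # Hypothetical fields, one per criterion described in the text.
    will_not_spin_up_or_connect: bool = False
    will_not_stay_synced_in_raid: bool = False
    smart_above_threshold: bool = False
    # True when every drive in the Pod was pulled (migration or unstable RAID).
    pulled_with_whole_pod: bool = False
    # Result of the third-party tester; None means the drive was not tested.
    passed_drive_tester: Optional[bool] = None


def classify(removal: Removal) -> Outcome:
    """Decide whether a removed drive counts as a failure."""
    if (removal.will_not_spin_up_or_connect
            or removal.will_not_stay_synced_in_raid
            or removal.smart_above_threshold):
        return Outcome.FAILED
    # Drives pulled with a whole Pod only count if they fail the tester.
    if removal.pulled_with_whole_pod and removal.passed_drive_tester is False:
        return Outcome.FAILED
    return Outcome.RETURNED_TO_SPARES


print(classify(Removal(smart_above_threshold=True)))
print(classify(Removal(pulled_with_whole_pod=True)))
print(classify(Removal(pulled_with_whole_pod=True, passed_drive_tester=False)))
```

The second case is a plain migration: the drive is still working, so it goes back to inventory rather than into the failure count.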

Takeaways: What Are the Best Hard Drives?

4TB Drives Are Great.

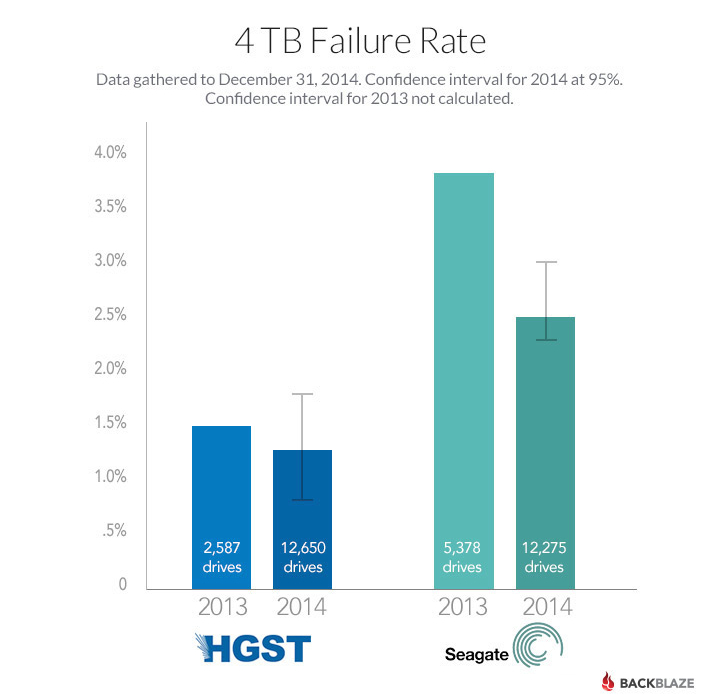

We like every one of the 4TB drives we bought this year. For the price, you get a lot of storage, and the drive failure rates have been really low. The Seagate Desktop HDD.15 has had the best price, and we have a LOT of them: over 12 thousand. The failure rate is a nice low 2.6% per year. Low price and high reliability are good for business.

The HGST drives, while priced a little higher, have an even lower failure rate, at 1.0% (for all HGST 4TB models combined). It’s not enough of a difference to be a big factor in our purchasing, but when there’s a good price, we grab some. We have over 12 thousand of these drives.
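The combined HGST 4TB figure can be roughly cross-checked from the table. The sketch below assumes that drive-years for a model can be approximated as drive count times average age, and that a combined rate is total failures divided by total drive-years. Both are assumptions made for illustration; the exact drive-day counts are not in this post.

```python
# (drive count, average age in years, annual failure rate) from the table.
hgst_4tb = {
    "Deskstar 5K4000": (2598, 1.8, 0.009),
    "Megascale 4000": (6949, 0.4, 0.014),
    "Megascale 4000.B": (3103, 0.7, 0.005),
}


def pooled_rate(models):
    """Combine per-model rates, weighting each by approximate drive-years."""
    total_drive_years = 0.0
    total_failures = 0.0
    for count, avg_age, rate in models.values():
        drive_years = count * avg_age          # approximation, see lead-in
        total_drive_years += drive_years
        total_failures += rate * drive_years   # implied number of failures
    return total_failures / total_drive_years


total_drives = sum(count for count, _, _ in hgst_4tb.values())
print(f"HGST 4TB drives: {total_drives:,}")
print(f"approximate combined failure rate: {pooled_rate(hgst_4tb):.1%}")
```

Weighting by drive-years rather than by drive count matters here because the three models have been in service for very different lengths of time.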

Where are the WD 4TB drives?

There is only one Storage Pod of Western Digital 4TB drives. Why? The reason is simple: price. We purchase drives through various channel partners for each manufacturer. We’ll put out an RFQ (Request for Quote) for, say, 2,000 4TB drives, and list the brands and models we have validated for use in our Storage Pods. Over the course of the last year, Western Digital drives were often not quoted, and when they were, they were never the lowest price. Generally the WD drives were $15-$20 more per drive. That’s too much of a premium to pay when the Seagate and HGST drives are performing so well.
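To put that premium in context, here is the arithmetic for the example order size mentioned above. The function name is made up for illustration.

```python
def order_premium(drives, premium_low, premium_high):
    """Extra cost in dollars of an order at a per-drive premium range."""
    return drives * premium_low, drives * premium_high


low, high = order_premium(drives=2000, premium_low=15, premium_high=20)
print(f"extra cost on a 2,000-drive order: ${low:,} to ${high:,}")
```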

3TB Drives Are Not So Great.

The HGST Deskstar 5K3000 3TB drives have proven to be very reliable, but expensive relative to other models (including similar 4TB drives by HGST). The Western Digital Red 3TB drives’ annual failure rate of 6.9% is a bit high but acceptable. The Seagate Barracuda 7200.14 3TB drives are another story. We’ll cover how we handled their failure rates in a future blog post.

Confidence in Seagate 4TB Drives

You might ask why we think the 4TB Seagate drives we have now will fare better than the 3TB Seagate drives we bought a couple of years ago. We wondered the same thing. When the 3TB drives were new and in their first year of service, their annual failure rate was 9.3%. The 4TB drives, in their first year of service, are showing a failure rate of only 2.6%. I’m quite optimistic that the 4TB drives will continue to do better over time.

6TB Drives and Beyond: Not Sure Yet

We’re beginning the transition from using 4TB to using 6TB drives. Currently we have 270 of the Western Digital Red 6TB drives. The failure rate is 3.1%, but there have been only three failures. The statistics give a 95% confidence that the failure rate is somewhere between 0.1% and 17.1%. We need to run the drives longer, and see more failures, before we can get a better number.

We have just 45 of the Seagate 6TB SATA 3.5 drives, although more are on order. They’ve only been running a few months, and none have failed so far. When we have more drives, and some have failed, we can start to compute failure rates.
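A model with zero failures still has a wide confidence interval, and a short sketch shows why. It assumes failures follow a Poisson process and that drive-years can be approximated as drive count times average age. Both are assumptions for illustration, not a description of how the table's intervals were produced, though the results land close to the table's upper bounds.

```python
import math


def upper_bound_zero_failures(drive_years, confidence=0.95):
    """Upper end of a two-sided interval on the annual failure rate
    when no failures were observed, under a Poisson assumption.

    With rate r and exposure T, P(0 failures) = exp(-r * T).
    Setting that equal to alpha / 2 and solving for r gives the bound.
    """
    alpha = 1.0 - confidence
    return -math.log(alpha / 2.0) / drive_years


# Drive count and average age (years) from the table.
seagate_6tb = upper_bound_zero_failures(45 * 0.4)
wd_4tb = upper_bound_zero_failures(45 * 0.8)

print(f"Seagate 6TB upper bound: {seagate_6tb:.1%}")  # table shows 21.1%
print(f"WD 4TB upper bound: {wd_4tb:.1%}")            # table shows 10.0%
```

The small differences from the table are expected, since the average ages used here are rounded to one decimal place. The bound shrinks only as drive-years accumulate, which is why we need to run the drives longer.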

Which Hard Drive Should I Buy?

All hard drives will eventually fail, but based on our environment, if you are looking for a good drive at a good value, it’s hard to beat the current crop of 4TB drives from HGST and Seagate. As we get more data on the 6TB drives, we’ll let you know.

What About the Hard Drive Reliability Data?

We will publish the data underlying this study in the next couple of weeks. There are over 12 million records covering 2014, which were used to produce the failure data in this blog post. There are over five million records from 2013. Along with the data, I’ll explain step by step how to compute an annual failure rate.
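Until that walkthrough is published, here is a minimal sketch of one common way to compute an annual failure rate: failures divided by drive-years of service. It assumes each record is one drive on one day, reduced to a `(model, failed)` pair. That record layout and the function name are assumptions for illustration, not the published format.

```python
from collections import defaultdict


def annual_failure_rates(records):
    """Compute failures per drive-year for each model.

    records: iterable of (model, failed) pairs, one per drive per day
    (hypothetical layout; see the note above).
    """
    drive_days = defaultdict(int)
    failures = defaultdict(int)
    for model, failed in records:
        drive_days[model] += 1
        failures[model] += int(failed)
    return {
        model: failures[model] / (drive_days[model] / 365)
        for model in drive_days
    }


# Made-up example: 100 drives of a fictional model observed for a year,
# with 2 of the daily records marking a failure.
records = [("EXAMPLE-MODEL", False)] * 36498 + [("EXAMPLE-MODEL", True)] * 2
rates = annual_failure_rates(records)
print(f"{rates['EXAMPLE-MODEL']:.1%}")
```

Counting drive-days rather than drives means a drive that ran for only part of the year contributes only the time it was actually in service.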