It’s early 2008, the concept of cloud computing is only just breaking into public awareness, and the economy is in the tank. Despite this less-than-kind business environment, five intrepid Silicon Valley veterans quit their jobs and pooled together $75K to launch a company with a simple goal: provide an easy-to-use, cloud-based backup service at a price that no one in the market could beat — $5 per month per computer.

The only problem: both hosted storage (through existing cloud services) and purchased hardware (buying servers from Dell or Microsoft) were too expensive to hit this price point. Enter Tim Nufire, aka: The Podfather.

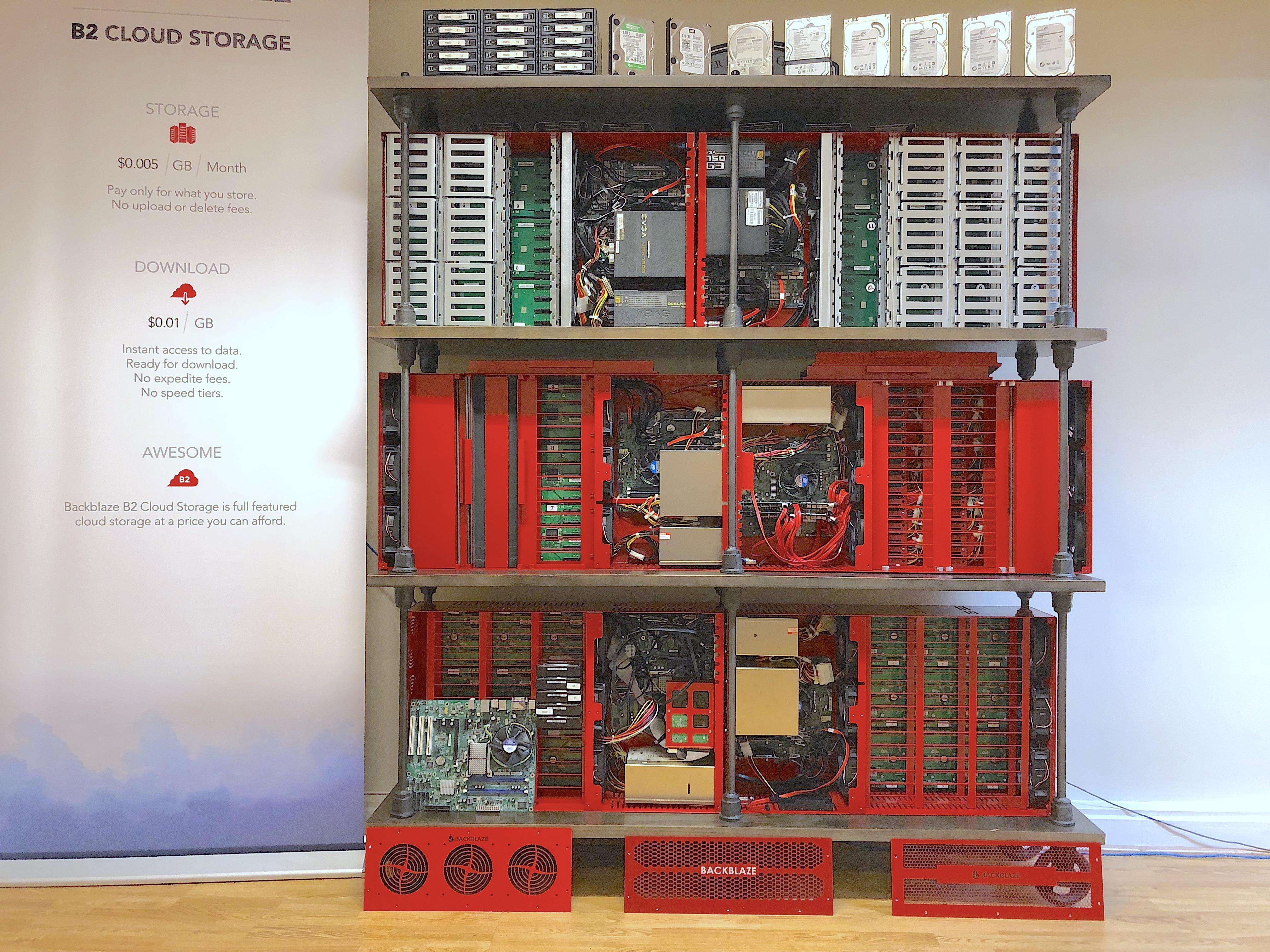

Tim led the effort to build what we at Backblaze call the Storage Pod: the physical hardware our company has relied on for data storage for more than a decade. On the occasion of the ten-year anniversary of the open sourcing of our Storage Pod 1.0 design, we sat down with Tim to relive the twists and turns that led from a crew of backup enthusiasts in an apartment in Palo Alto to a company with four data centers spread across the world holding 2,100 storage pods and closing in on an exabyte of storage.

✣ ✣ ✣

Editors: So Tim, it all started with the $5 price point. I know we did market research and that was the price at which most people shrugged and said they’d pay for backup. But it was so audacious! The tech didn’t exist to offer that price. Why do you start there?

Tim Nufire: It was the pricing given to us by the competitors; they didn’t give us a lot of choice. But it was never a question of if we should do it, but how we would do it. I had been managing my own backups for my entire career; I cared about backups. So it’s not like backup was new, or particularly hard. I mean, I firmly believe Brian Wilson’s (Backblaze’s Chief Technology Officer) top line: You read a byte, you write a byte. You can read the byte more gently than other services so as to not impact the system someone is working on. You might be able to read a byte a little faster. But at the end of the day, it’s an execution game, not a technology game. We simply had to out-execute the competition.

E: Easy to say now, with a company of 113 employees and more than a decade of success behind us. But at that time, you were five guys crammed into a Palo Alto apartment with no funding and barely any budget and the competition — Dell, HP, Amazon, Google, and Microsoft — they were huge! How do you approach that?

TN: We always knew we could do it for less. We knew that the math worked. We knew what the cost of a 1 TB hard drive was, so we knew how much it should cost to store data. We knew what those markups were. We knew, looking at a Dell 2900, how much the margin was in that box. We knew they were overcharging. At that time, I could not build a desktop computer for less than Dell could build it. But I could build a server at half their cost.

I don’t think Dell or anyone else was being irrational. As long as they have customers willing to pay their hard margins, they can’t adjust for the potential market. They have to get to the point where they have no choice. We didn’t have that luxury.

So, at the beginning, we were reluctant hardware manufacturers. We were manufacturing because we couldn’t afford to pay what people were charging, not because we had any passion for hardware design.

E: Okay, so you came on at that point to build a cloud. Is that where your title comes from? Chief Cloud Officer? The pods were a little ways down the road, so Podfather couldn’t have been your name yet. …

TN: This was something like December, 2007. Gleb (Budman, the Chief Executive Officer of Backblaze) and I went snowboarding up in Tahoe, and he talked me into joining the team. … My title at first was all wrong, I never became the VP of Engineering, in any sense of the word. That was never who I was. I held the title for maybe five years, six years before we finally changed it. Chief Cloud Officer means nothing, but it fits better than anything else.

E: It does! You built the cloud for Backblaze with the Storage Pod as your water molecule (if we’re going to beat the cloud metaphor to death). But how does it all begin? Take us back to that moment: the podception.

TN: Well, the first pod, per se, was just a bunch of USB drives strapped to a shelf in the data center attached to two Dell 2900 towers. It didn’t last more than an hour in production. As soon as it got hit with load, it just collapsed. Seriously! We went live on this and it lasted an hour. It was a complete meltdown.

Two things happened: First, the bus was completely unstable, so the USB drives were unstable. Second, the DRBD (Distributed Replicated Block Device), which is designed to protect your data by live mirroring it between the two towers, immediately fell apart. You implement a DRBD not because it works in a well-running situation, but because it covers you in the failure mode. And in failure mode it just unraveled, in an hour. It went into a split-brain mode under the hardware failures that the USB drives were causing. A well-running DRBD is fully mirrored, and split-brain mode is when the two sides simply give up and start acting autonomously because they don’t know what the other side is doing and they’re not sure who is boss. The data is essentially inconsistent at that point because you can choose A or B but the two sides are not in agreement.

While the USB specs say you can connect something like 256 or 128 drives to a hub, we were never able to do more than like, five. After something like five or six, the drives just start dropping out. We never really figured it out because we abandoned the approach. I just took the drives out and shoved them inside of the Dells, and those two became pods number 0 and 1. The Dells had room for 10 or 8 drives apiece, and so we brought that system live.

That was what the first six years of this company were like, just a never-ending stream of those kinds of moments, mostly not panic inducing, mostly just: you put your head down and you start working through the problems. There’s a little bit of adrenaline, that feeling before a big race of an impending moment. But you have to just keep going.

E: Wait, so this wasn’t in testing? You were running this live?

TN: Totally! We were in friends-and-family beta at the time. But the software was all written. We didn’t have a lot of customers, but we had launched, and we managed to recover the files: whatever was backed up. The system has always had self healing built into the client.

E: So where do you go from there? What’s the next step?

TN: These were the early days. We were terrified of any commitments. So I think we had leased a half cabinet at the 365 Main facility in San Francisco, because that was the most we could imagine committing to in a contract: We committed to a year’s worth of this tiny little space.

We had those first two pods, the two Dell towers (0 and 1), which we eventually built out using external enclosures. So those guys had 40 or 45 drives by the end, with these little black boxes attached to them.

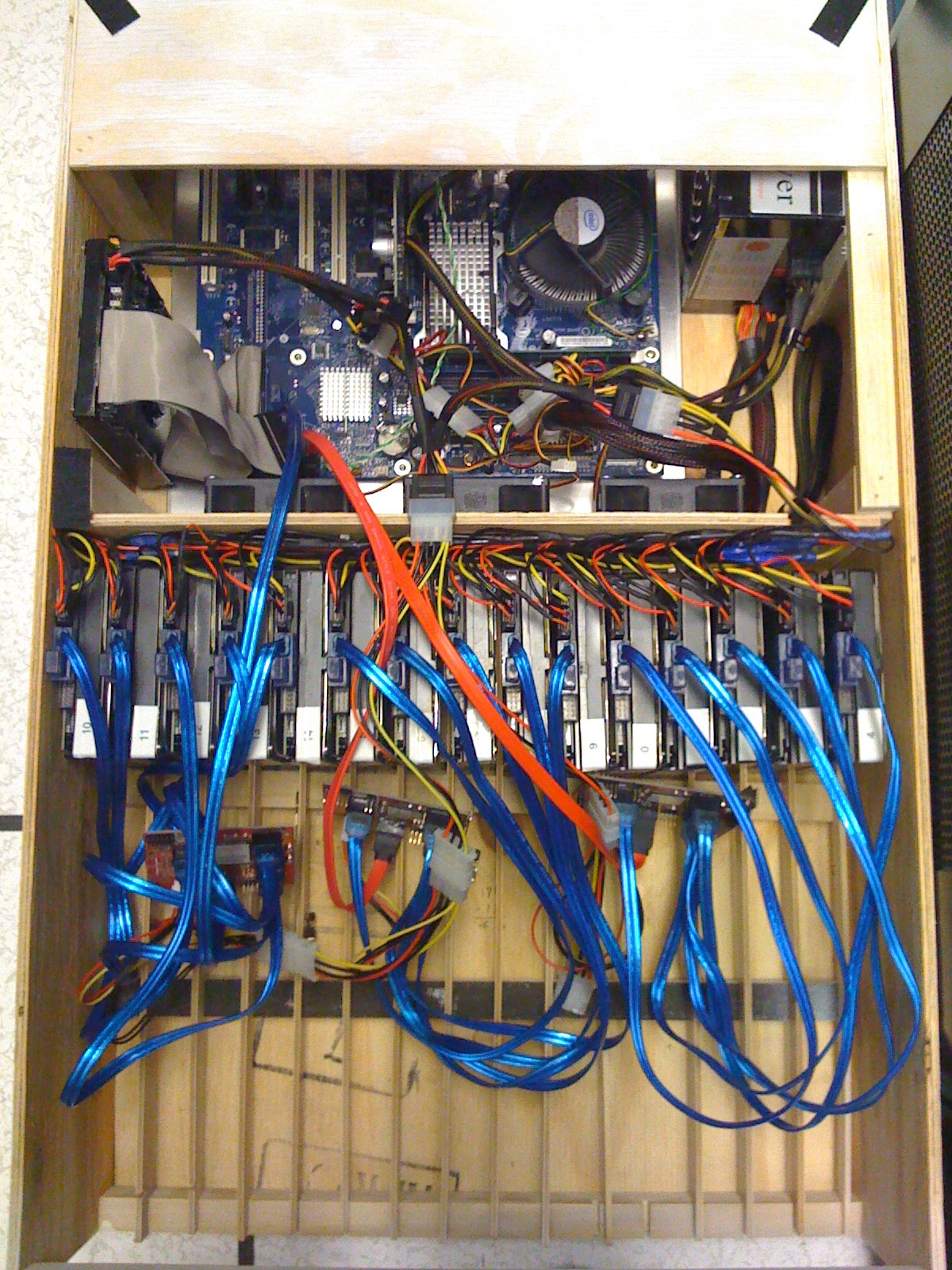

Pod number 2 was the plywood pod, which was another moment of sitting in the data center with a piece of hardware that just didn’t work out of the gate. This was Chris Robertson’s prototype. I credit him with the shape of the basic pod design, because he’s the one that came up with the top loaded 45 drives design. He mocked it up in his home woodshop (also known as a garage).

E: Wood in a data center? Come on, that’s crazy, right?

TN: It was what we had! We didn’t have a metal shop in our garage, we had a woodshop in our garage, so we built a prototype out of plywood, painted it white, and brought it to the data center. But when I went to deploy the system, I ended up having to recable and rewire and reconfigure it on the fly, sitting there on the floor of the data center, kinda similar to the first day.

The plywood pod was originally designed to be 45 drives, top loaded with port multipliers — we didn’t have backplanes. The port multipliers were these little cards that took one set of cables in and five cables out. They were cabled from the top. That design never worked. So what actually got launched was a fifteen drive system that had these little five drive enclosures that we shoved into the face of the plywood pod. It came up as a 15 drive, traditionally front-mounted design with no port multipliers. Nothing fancy there. Those boxes literally have five SATA connections on the back, just a one-to-one cabling.

E: What happened to the plywood pod? Clearly it’s cast in bronze somewhere, right?

TN: That got thrown out in the trash in Palo Alto. I still defend the decision. We were in a small one-bedroom apartment in Palo Alto and all this was cruft.

E: Brutal! But I feel like this is indicative of how you were working. There was no looking back.

TN: We didn’t have time to ask the question of whether this was going to work. We just stayed ahead of the problems: Pods 0 and 1 continued to run, pod 2 came up as a 15 drive chassis, and runs.

The next three pods are the first where we worked with Protocase. These are the first run of metal — the ones where we forgot a hole for the power button, so you’ll see the pried open spots where we forced the button in. These are also the first three with the port-multiplier backplane. So we built a chassis around that, and we had horrible drive instability.

We were using the Western Digital Green, 1 TB drives. But we couldn’t keep them in the RAID. We wrote these little scripts so that in the middle of the night, every time a drive dropped out of the array, the script would put it back in. It was this constant motion and churn creating a very unstable system.
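As a rough illustration of the kind of watchdog described here, the sketch below assumes Linux software RAID managed with `mdadm`; the interview doesn't say which RAID stack or scripting language those scripts actually used. The array name, the device names, and the `parse_mdstat_members` and `readd_commands` helpers are all hypothetical. It only builds the commands as strings and does not run them.

```python
import re


def parse_mdstat_members(mdstat_text, array="md0"):
    """Return {device: is_faulty} for one array, from /proc/mdstat-style text.

    Assumption: Linux md RAID, where member entries look like "sdb1[0]"
    and a failed member is marked "sdc1[1](F)".
    """
    members = {}
    for line in mdstat_text.splitlines():
        if line.startswith(array + " :"):
            for dev, flag in re.findall(r"(\w+)\[\d+\](\(F\))?", line):
                members[dev] = bool(flag)
    return members


def readd_commands(mdstat_text, expected, array="md0"):
    """Build the mdadm commands that would put dropped drives back in the array.

    `expected` is a hypothetical list of the devices that should be members.
    """
    members = parse_mdstat_members(mdstat_text, array)
    commands = []
    for dev in expected:
        if dev not in members:
            # The drive has dropped out of the array entirely: try to re-add it.
            commands.append(f"mdadm --manage /dev/{array} --re-add /dev/{dev}")
        elif members[dev]:
            # The drive is marked faulty: remove it first, then re-add it.
            commands.append(f"mdadm --manage /dev/{array} --remove /dev/{dev}")
            commands.append(f"mdadm --manage /dev/{array} --re-add /dev/{dev}")
    return commands


if __name__ == "__main__":
    # Made-up sample status text, not output captured from a real pod.
    sample = (
        "Personalities : [raid6]\n"
        "md0 : active raid6 sdd1[2] sdc1[1](F) sdb1[0]\n"
    )
    for command in readd_commands(sample, ["sdb1", "sdc1", "sdd1", "sde1"]):
        print(command)
```

Run from a scheduler every few minutes, a script like this keeps an array limping along, but it treats the symptom rather than the cause, which is the churn described above.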

We suspected the problem was with power. So we made the octopus pod. We drilled holes in the bottom, and ran it off of three PSUs beneath it. We thought: “If we don’t have enough power, we’ll just hit it with a hammer.” Same thing on cooling: “What if it’s getting too hot?” So we put a box fan on top and blew a lot of air into it. We were just trying to figure out what it was that was causing trouble and grief. Interestingly, the array in the plywood pod was stable, but when we replaced the enclosure with steel, it became unstable as well!

We slowly circled in on vibration as the problem. That plywood pod had actual disk enclosures with caddies and good locking mechanisms, so we thought the lack of caddies and locking mechanisms could be the issue. I was working with Western Digital at the time, too, and they were telling me that they also suspected vibration as the culprit. And I kept telling them, ‘They are hard drives! They should work!’

At the time, Western Digital was pushing me to buy enterprise drives, and they finally just gave me a round of enterprise drives. They were worse than the consumer drives! So they came over to the office to pick up the drives because they had accelerometers and a lot of other stuff to give us data on what was wrong, and we never heard from them again.

We learned later that, when they showed up in an office in a one bedroom apartment in Palo Alto with five guys and a dog, they decided that we weren’t serious. It was hard to get a call back from them after that … I’ll admit, I was probably very hard to deal with at the time. I was this ignorant wannabe hardware engineer on the phone yelling at them about their hard drives. In hindsight, they were right; the chassis needed work.

But I just didn’t believe that vibration was the problem. It’s just 45 drives in a chassis. I mean, I have a vibration app on my phone, and I stuck the phone on the chassis and there’s vibration, but it’s not like we’re trying to run this inside a race car doing multiple Gs around corners, it was a metal box on a desk with hard drives spinning at 5400 or 7200 rpm. This was not a seismic shake table!

The early hard drives were secured with EPDM rubber bands. It turns out that real rubber (latex) turns into powder in about two months in a chassis, probably from the heat. We discovered this very quickly after buying rubber bands at Staples that just completely disintegrated. We eventually got better bands, but they never really worked. The hope was that they would secure a hard drive so it couldn’t vibrate its neighbors, and yet we were still seeing drives dropping out.

At some point we started using clamp down lids. We came to understand that we weren’t trying to isolate vibration between the drives, but we were actually trying to mechanically hold the drives in place. It was less about vibration isolation, which is what I thought the rubber was going to do, and more about stabilizing the SATA connector on the backend, as in: You don’t want the drive moving around in the SATA connector. We were also getting early reports from Seagate at the time. They took our chassis and did vibration analysis and, over time, we got better and better at stabilizing the drives.

We started to notice something else at this time: The Western Digital drives had these model numbers followed by extension numbers. We realized that drives that stayed in the array tended to have the same set of extensions. We began to suspect that those extensions were manufacturing codes, something to do with which backend factory they were built in. So there were subtle differences in manufacturing processes that dictated whether the drives were tolerant of vibration or not. Central Computer was our dominant source of hard drives at the time, and so we were very aggressively trying to get specific runs of hard drives. We only wanted drives with a certain extension. This was before the Thailand drive crisis, before we had a real sense of what the supply chain looked like. At that point we just knew some drives were better than others.

E: So you were iterating with inconsistent drives? Wasn’t that insanely frustrating?

TN: No, just gave me a few more gray hairs. I didn’t really have time to dwell on it. We didn’t have a choice of whether or not to grow the storage pod. The only path was forward. There was no plan B. Our data was growing and we needed the pods to hold it. There was never a moment where everything was solved, it was a constant stream of working on whatever the problem was. It was just a string of problems to be solved, just “wheels on the bus.” If the wheels fall off, put them back on and keep driving.

E: So what did the next set of wheels look like then?

TN: We went ahead with a second small run of steel pods. These had a single Zippy power supply, with the boot drive hanging over the motherboard. This design worked until we went to 1.5 TB drives and the chassis would not boot. Clearly a power issue, so Brian Wilson and I sat there and stared at the non-functioning chassis trying to figure out how to get more power in.

The issue with power was not that we were running out of power on the 12V rail. The 5V rail was the issue. All the high end, high-power PSUs give you more and more power on 12V because that’s what the gamers need — it’s what their CPUs and the graphics card need, so you can get a 1000W or a 1500W power supply and it gives you a ton of power on 12V, but still only 25 amps on 5V. As a result, it’s really hard to get more power on the 5V rail, and a hard drive takes 12V and 5V: 12V to spin the motor and 5V to power the circuit board. We were running out of the 5V.
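The 5V arithmetic can be sketched in a few lines. The 45 drives and the 25 amps on the 5V rail come from this interview; the per-drive current figures are hypothetical placeholders chosen only to show the shape of the problem, and real values would have to come from the drive datasheets. The function names are likewise made up for the example.

```python
import math

# From the interview: 45 drives per pod, about 25 A available on the 5V rail.
DRIVES_PER_POD = 45
RAIL_5V_CAPACITY_AMPS = 25.0

# Hypothetical 5V draw per drive in amps. These are NOT measured values.
HYPOTHETICAL_5V_DRAW = {
    "smaller drive (assumed 0.5 A)": 0.5,
    "larger drive (assumed 0.7 A)": 0.7,
}


def rail_load_amps(drive_count, amps_per_drive, other_load_amps=0.0):
    """Total current drawn from one rail by the drives plus any other load."""
    return drive_count * amps_per_drive + other_load_amps


def supplies_needed(load_amps, capacity_amps):
    """Smallest number of identical supplies whose combined rail covers the load."""
    return math.ceil(load_amps / capacity_amps)


if __name__ == "__main__":
    for label, amps in HYPOTHETICAL_5V_DRAW.items():
        load = rail_load_amps(DRIVES_PER_POD, amps)
        count = supplies_needed(load, RAIL_5V_CAPACITY_AMPS)
        print(f"{label}: about {load:.1f} A on 5V, needs {count} supply(ies)")
```

Under these assumed numbers, a modest increase in per-drive 5V draw is enough to push 45 drives past what a single supply can deliver on that rail, no matter how much headroom remains on 12V.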

So our solution was two power supplies, and Brian and I were sitting there trying to visually imagine where you could put another power supply. Where are you gonna put it? We can put it where the boot drive is, and move the boot drive to the side, and just kind of hang the PSU up and over the motherboard. But the biggest consequence of this was, again, vibration. The side of a vibrating chassis isn’t the best place to mount a boot drive. So we had higher than normal boot drive failures in those nine.

So the next generation, after pod number 8, was the beginning of Storage Pod 1.0. We were still using rubber bands, but it had two power supplies, 45 drives, and we built 20 of them, total. Casey Jones, as our designer, also weighed in at this point to establish how they would look. He developed the faceplate design and doubled down on the deeper shade of red. But all of this was expensive and scary for us: We’re gonna spend $10 grand!? We don’t have much money. We had been two years without salary at this point.

We talked to Ken Raab from Sonic Manufacturing, and he convinced us that he could build our chassis, all in, for less than we were paying. He would take the task off my plate, I wouldn’t have to build the chassis, and he would build the whole thing for less than I would spend on parts … and it worked. He had better backend supplier connections, so he could shave a little expense off of everything and was able to mark up 20%.

We fixed the technology and the human processes. On the technology side, we were figuring out the hardware and hard drives, we were getting more and more stable. Which was required. We couldn’t have the same failure rates we were having on the first three pods. In order to reduce (or at least maintain) the total number of problems per day, you have to reduce the number of problems per chassis, because there’s 32 of them now.

We were also learning how to adapt our procedures so that the humans could live. By “the humans,” I mean me and Sean Harris, who joined me in 2010. There are physiological and psychological limits to what is sustainable and we were nearing our wits’ end. … So, in addition to stabilizing the chassis design, we got better at limiting the type of issues that would wake us up in the middle of the night.

E: So you reached some semblance of stability in your prototype and in your business. You’d been sprinting with no pay for a few years to get to this point and then … you decide to give away all your work for free? You open sourced Storage Pod 1.0 on September 9th, 2009. Were you a nervous wreck that someone was going to run away with all your good work?

TN: Not at all. We were dying for press. We were ready to tell the world anything they would listen to. We had no shame. My only regret is that we didn’t do more. We open sourced our design before anyone was doing that, but we didn’t build a community around it or anything.

Remember, we didn’t want to be a manufacturer. We would have killed for someone to build our pods better and cheaper than we could. Our hope from the beginning was always that we would build our own platform until the major vendors did for the server market what they did in the personal computing market. Until Dell would sell me the box that I wanted at the price I could afford, I was going to continue to build my chassis. But I always assumed they would do it faster than a decade.

Supermicro tried to give us a complete chassis at one point, but their problem wasn’t high margin; they were targeting too high of performance. I needed two things: Someone to sell me a box and not make too much profit off of me, and I needed someone who would wrap hard drives in a minimum performance enclosure and not try to make it too redundant or high performance. Put in one RAID controller, not two; daisy chain all the drives; let us suffer a little! I don’t need any of the hardware that can support SSDs. But no matter how much we ask for barebones servers, no one’s been able to build them for us yet.

So we’ve continued to build our own. And the design has iterated and scaled with our business. So we’ll just keep iterating and scaling until someone can make something better than we can.

E: Which is exactly what we’ve done, leading from Storage Pod 1.0 to 2.0, 3.0, 4.0, 4.5, 5.0, to 6.0 (if you want to learn more about these generations, check out our Pod Museum), preparing the way for more than 800 petabytes of data in management.

✣ ✣ ✣

But while Tim is still waiting to pass along the official Podfather baton, he’s not alone. There was the early help from Brian Wilson, Casey Jones, Sean Harris, and a host of others, and then in 2014, Ariel Ellis came aboard to wrangle our supply chain. He grew in that role over time until he took over responsibility for charting the future of the Pod via Backblaze Labs, becoming the Podson, so to speak. Today, he’s sketching the future of Storage Pod 7.0, and, provided no one builds anything better in the meantime, he’ll tell you all about it on our blog.