Here at Backblaze, we’ve been known to do things a bit differently. From Storage Pods and Backblaze Vaults to drive farming and hard drive stats, we often take a different path. So, it’s no surprise we love stories about people who think outside of the box when presented with a challenge. This is especially true when that story involves building a mongo storage server, a venerable Toyota 4Runner, and a couple of IT engineers hell-bent on getting 1.2 petabytes of their organization’s data off-site. Let’s meet Alex Acosta and Andrew Davis of Gladstone Institutes.

Data on the Run

The security guard at the front desk nodded knowingly as Alex and Andrew rolled the three large Turtle cases through the lobby and out the front door of Gladstone Institutes. Well known and widely respected, the two IT engineers comprised two-thirds of the IT Operations staff at the time and had 25 years of Gladstone experience between them. So as odd as it might seem to have IT personnel leaving a secure facility after-hours with three large cases, everything was on the up-and-up.

It was dusk in mid-February. Alex and Andrew braced for the cold as they stepped out into the nearly empty parking lot toting the precious cargo within those three cases. Andrew’s 4Runner was close, having arrived early that day: the big day, moving day. They gingerly lugged the heavy cases into the 4Runner. Most of the weight was the cases themselves; the rest was a 4U storage server in one case and 36 hard drives split between the other two. An insignificant part of the weight, if any at all, was the reason they were doing all of this: 200 terabytes of Gladstone Institutes research data.

They secured the cases, slammed the tailgate shut, climbed into the 4Runner, and put the wheels in motion for the next part of their plan. They eased onto Highway 101 and headed south. Traffic was terrible, even in the carpool lane; dinner would be late, like so many dinners before.

Back to the Beginning

There had been many other late nights since they started on this project six months before. The Fireball XXXL project, as Alex and Andrew eventually named it, was driven by their mission to safeguard Gladstone’s biomedical research data from imminent disaster. On an unknown day in mid-summer, Alex and Andrew were in the server room at Gladstone surrounded by over 900 tapes that were posing as a backup system.

Andrew mused, “It could be ransomware, the building catches on fire, somebody accidentally deletes the datasets because of a command-line entry, any number of things could happen that would destroy all this.” Alex, as he waved his hand across the ever-expanding tape library, added, “We can’t rely on this anymore. Tapes are cumbersome, messy and they go bad even when you do everything right. We waste so much time just troubleshooting things that in 2020 we shouldn’t be troubleshooting anymore.” They resolved to find a better way to get their data off-site.

Reality Check

Alex and Andrew listed the goals for their project: get the 1.2 petabytes of data currently stored on-site and in their tape library safely off-site, be able to add 10–20 terabytes of new data each day, and be able to delete files as they needed along the way. The fact that practically every byte of data in question represented biomedical disease research—including data with direct applicability to fighting a global pandemic—meant that they needed to accomplish all of the above with minimal downtime and maximum reliability. Oh, and they had to do all of this without increasing their budget. Optimists.

With cloud storage as the most promising option, they first considered building their own private cloud in a distant data center in the desert. They quickly dismissed the idea as the upfront costs were staggering, never mind the ongoing personnel and maintenance costs of managing their distant systems.

They decided the best option was using a cloud storage service and they compared the leading vendors. Alex was familiar with Backblaze, having followed the blog for years, especially the posts on drive stats and Storage Pods. Even better, the Backblaze B2 Cloud Storage service was straightforward and affordable, something he couldn’t say about the other leading cloud storage vendors.

The next challenge was bandwidth. You might think having a 5 Gb/s connection would be enough, but they had a research-heavy, data-hungry organization using that connection. They sharpened their bandwidth pencils and, taking into account institutional usage, they calculated they could easily support uploads of 10–20 terabytes per day. Trouble was, getting the existing 1.2 petabytes of data uploaded would be another matter entirely. They contacted their bandwidth provider and were told they could double their current bandwidth to 10 Gb/s for a multi-year agreement at nearly twice the cost and, by the way, it would be several months to a year before they could start work. Ouch.
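For readers who want to sharpen their own bandwidth pencils, here is a rough sketch of that arithmetic. It assumes decimal units (1 TB = 10^12 bytes) and a perfectly efficient link; the function names and the 1 Gb/s "spare capacity" figure are hypothetical, chosen only for illustration, and are not numbers from Gladstone.

```python
SECONDS_PER_DAY = 86_400


def required_gbps(terabytes_per_day: float) -> float:
    """Sustained rate in Gb/s needed to move a given daily volume."""
    bits_per_day = terabytes_per_day * 1e12 * 8
    return bits_per_day / SECONDS_PER_DAY / 1e9


def days_to_upload(terabytes: float, gbps: float) -> float:
    """Days needed to move a backlog at a constant rate in Gb/s."""
    bits = terabytes * 1e12 * 8
    return bits / (gbps * 1e9) / SECONDS_PER_DAY


# Daily uploads of 10-20 TB need roughly 0.9 to 1.9 Gb/s sustained.
print(round(required_gbps(10), 2), round(required_gbps(20), 2))

# The 1.2 PB backlog at the full 5 Gb/s, and at a hypothetical
# 1 Gb/s of spare capacity left over after everything else.
print(round(days_to_upload(1200, 5), 1))
print(round(days_to_upload(1200, 1), 1))
```

Even with the whole 5 Gb/s link to themselves, the backlog alone would take about 22 days of uninterrupted transfer. With only a slice of the link free, say the hypothetical 1 Gb/s above, it stretches to roughly 111 days.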

They turned to Backblaze, who offered their Backblaze Fireball data transfer service, which could upload about 70 terabytes per trip. “Even with the Fireball, it will take us 15, maybe 20, round trips,” lamented Andrew during another late-night session of watching backup tapes. “I wish they had a bigger box,” said Alex, to which Andrew replied, “Maybe we could build one.”
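Andrew’s estimate is easy to check. The sketch below assumes every trip carries a full load and ignores data added along the way; `round_trips` is an illustrative helper, and the 200 terabyte capacity is the figure quoted for the server they went on to build.

```python
import math


def round_trips(total_tb: float, capacity_tb: float) -> int:
    """Number of full-load trips needed to move total_tb of data."""
    return math.ceil(total_tb / capacity_tb)


print(round_trips(1200, 70))   # standard Fireball, about 70 TB per trip -> 18
print(round_trips(1200, 200))  # a 200 TB box -> 6
```

Eighteen trips lands squarely inside Andrew’s “15, maybe 20,” while a box roughly three times the size cuts that to six.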

The plan was born: build a mongo storage server, load it with data, take it to Backblaze.

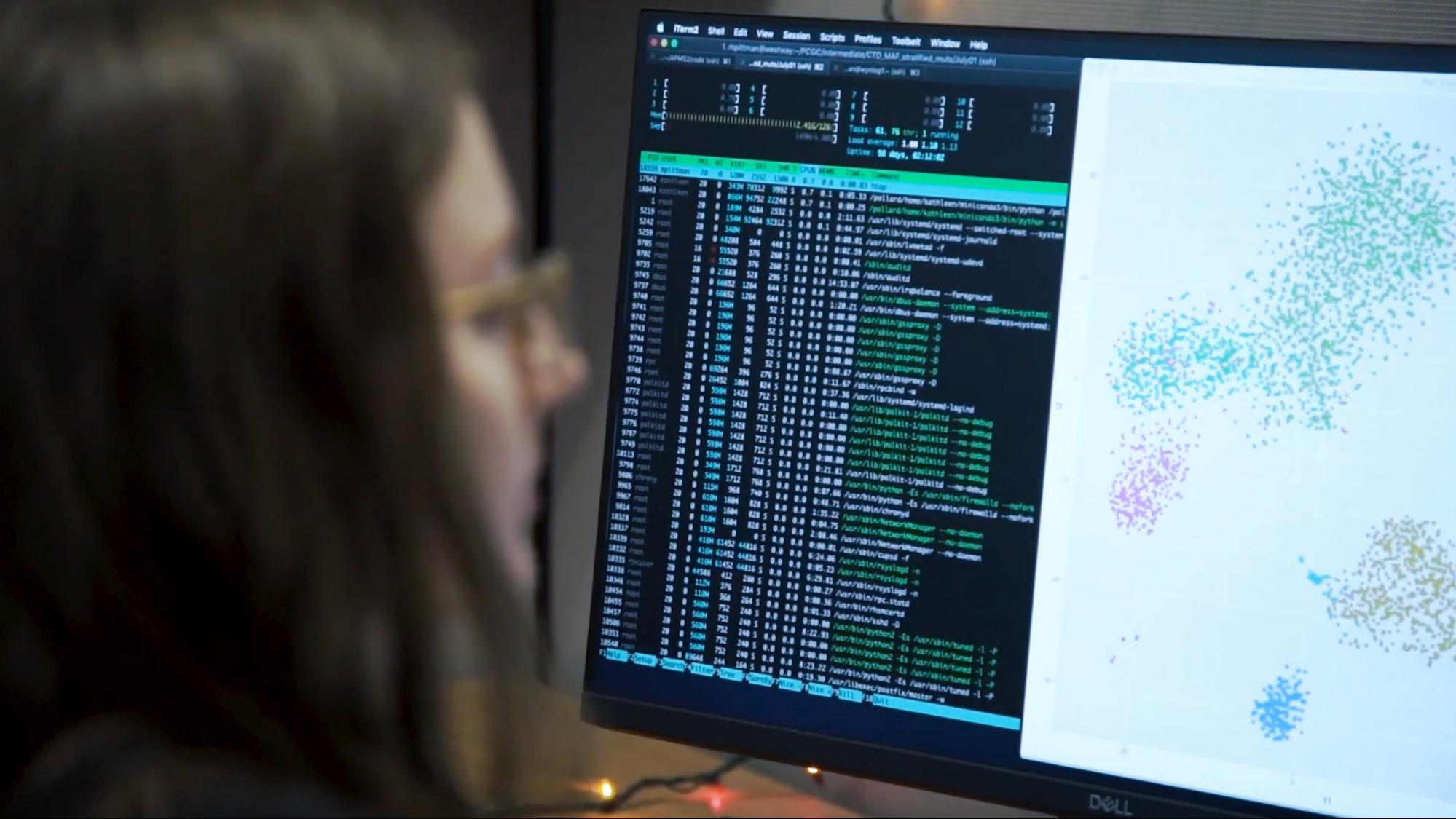

Andrew Davis in Gladstone’s server room.

The Ask

Before they showed up at a Backblaze data center with their creation, they figured they should ask Backblaze first. Alex noted, “With most companies if you say, ‘Hey, I want to build a massive file server, shuttle it into your data center, and plug it in. Don’t you trust me?’ They would say, ‘No,’ and hang up, but Backblaze didn’t, they listened.”

After much consideration, Backblaze agreed to enable Gladstone personnel to enter a nearby data center that was a peering point for the Backblaze network. Thrilled to find kindred spirits, Alex and Andrew now had a partner in the Fireball XXXL project. While this collaboration was a unique opportunity for both parties, for Andrew and Alex it would also mean more late nights and microwaved burritos. That didn’t matter now; they felt like they had a great chance to make their project work.

The Build

Alex and Andrew had squirreled away some budget for a seemingly unrelated project: to build an in-house storage server to serve as a warm backup system for currently active lab projects. That way if anything went wrong in a lab, they could retrieve the last saved version of the data as needed. Using those funds, they realized they could build something to be used as their supersized Fireball XXXL, and then once the data transfer cycles were finished, they could repurpose the system to be the backup server they had budgeted.

Inspired by Backblaze’s open-source Storage Pod, they worked with Backblaze on the specifications for their Fireball XXXL. They went the custom build route starting with a 4U chassis and big drives, and then they added some beefy components of their own.

Fireball XXXL

- Chassis: 4U Supermicro 36-bay, 3.5-inch disk chassis, built by iXsystems.

- Processor: Dual CPU Intel Xeon Gold 5217.

- RAM: 4 x 32GB (128GB).

- Data Drives: 36 x 14TB HE14 from Western Digital.

- ZIL: 120GB NVMe SSD.

- L2ARC: 512GB SSD.

They basically built a 36-bay, 200 terabyte RAID 1+0 system to do the data replication using rclone. Andrew noted, “Rclone is resource-heavy, both on RAM and CPU cycles. When we spec’d the system we needed to make sure we had enough muscle so rclone could push data at 10 Gb/s. It’s not just reading off the drives; it’s the processing required to do that.”
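The capacity figure follows roughly from the parts list. This sketch assumes the RAID 1+0 layout means two-way mirrors (every block stored twice) and uses decimal terabytes as drive vendors do; the variable names are illustrative. The article does not say how the quoted 200 terabytes was derived, so the gap between it and the mirrored total is presumably filesystem overhead and free-space headroom.

```python
DRIVES = 36
DRIVE_TB = 14  # decimal terabytes per drive

raw_tb = DRIVES * DRIVE_TB                   # total raw capacity
mirrored_tb = raw_tb / 2                     # two-way mirroring halves it
mirrored_tib = mirrored_tb * 1e12 / 2**40    # same amount in binary TiB

print(raw_tb)                  # 504
print(mirrored_tb)             # 252.0
print(round(mirrored_tib, 1))  # 229.2
```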

Loading Up

Gladstone runs TrueNAS on their on-premises production systems so it made sense to use it on their newly built data transfer server. “We were able to do a ZFS send from our in-house servers to what looked like a gigantic external hard drive, for lack of a better description,” Andrew said. “It allowed us to replicate at the block level, compressed, so it was much higher performance in copying data over to that system.”

Andrew and Alex had previously determined that they would start with the four datasets that were larger than 40 terabytes each. Each dataset represented years of research from their respective labs, placing them at the top of the off-site backup queue. Over the course of 10 days, they loaded the Fireball XXXL with the data. Once finished, they shut the system down and removed the drives. Opening the foam-lined Turtle cases they had previously purchased, they gingerly placed the chassis into one case and the 36 drives in the other two. They secured the covers and headed towards the Gladstone lobby.

At the Data Center

Alex and Andrew eventually arrived at the data center where they’d find the needed Backblaze network peering point. Upon entry, inspections ensued and even though Backblaze had vouched for the Gladstone chaps, the process to enter was arduous. As it should be. Once in their assigned room, they connected a few cables, typed in a few terminal commands and data started uploading to their Backblaze B2 account. The Fireball XXXL performed as expected, with a sustained transfer rate of between 8 and 10 Gb/s. It took a little over three days to upload all the data.

They would make another trip a few weeks later and have planned two more. With each trip, more Gladstone data is safely stored off-site.

Gladstone Institutes, with over 40 years of history behind them and more than 450 staff, is a world leader in the biomedical research fields of cardiovascular and neurological diseases, genomic immunology, and virology, with some labs recently shifting their focus to SARS-CoV-2, the virus that causes COVID-19. The researchers at Gladstone rely on their IT team to protect and secure their life-saving research.

When data is literally life-saving, backing up is that much more important.

Epilogue

Before you load up your 200 terabyte media server into the back of your SUV or pickup and head for a Backblaze data center—stop. While we admire the resourcefulness of Andrew and Alex, on our side the process was tough. The security procedures, associated paperwork, and time needed to get our Gladstone heroes access to the data center and our network with their Fireball XXXL were “substantial.” Still, we are glad we did it. We learned a tremendous amount during the process, and maybe we’ll offer our own Fireball XXXL at some point. If we do, we know where to find a couple of guys who know how to design one kick-butt system. Thanks for the ride, gents.