Data Worthy Drives

Backblaze stores over 70 petabytes of data for our customers. That data currently resides on 561 Backblaze Storage Pods containing 25,245 spinning hard drives. Each month we add another 15-20 Storage Pods, each adding another 45 hard drives to our collection. Earning a place in a Backblaze Storage Pod is no easy task; there are inspections, formatting, integration testing, load testing, and more. Join us as we follow Stephen through the process of becoming a Backblaze hard drive worthy of our customers’ data.
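The drive count follows directly from the Pod count, since every Pod holds 45 drives. A quick arithmetic check of the figures above, written as a small Python snippet (the variable names are illustrative):

```python
# Figures quoted in the paragraph above.
PODS = 561
DRIVES_PER_POD = 45
TOTAL_DRIVES = 25_245

# Every Storage Pod holds exactly 45 drives, so the two counts should agree.
assert PODS * DRIVES_PER_POD == TOTAL_DRIVES

# Adding 15 to 20 Pods a month means adding this many drives a month.
monthly_low = 15 * DRIVES_PER_POD
monthly_high = 20 * DRIVES_PER_POD
print(f"{monthly_low} to {monthly_high} new drives per month")  # 675 to 900 new drives per month
```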

Who Is Stephen?

Stephen was one of the hundreds of drives that line up every month hoping to earn a place in a Backblaze Storage Pod. Stephen came to Backblaze through our customer drive farming program. We normally don’t name our drives, but Stephen, the hard drive, was sent to us by Stephen, the human, as part of that program. Stephen actually started out as an external USB hard drive, which meant he had to be “shucked,” his internal drive removed from its enclosure, before he could begin his journey to prove he belonged among the thousands of other hard-working hard drives in Backblaze Storage Pods.

Let’s Get Physical

After his “shucking,” the first step in the process for Stephen was a physical inspection that looked for any external damage, bent pins, missing screws or rivets, a chipped circuit board, and so on. Stephen was a Seagate 4TB drive, and after passing his physical inspection, he was grouped with 44 other similar drives. We group drives by manufacturer, model, and type, including matching spindle speeds. The 45 candidate drives were then ready to be installed in a Storage Pod. Each Storage Pod is manufactured and assembled in the USA, with internal parts (SATA cards, backplanes, power supplies, etc.) sourced from around the globe. Once a Storage Pod is assembled, its boot drive is formatted and imaged with an operating system and the Backblaze Storage Pod server software.
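The grouping rule above can be sketched in Python. This is a minimal illustration, not Backblaze's actual tooling: the `Drive` record, its `drive_type` field (standing in for the unspecified "type"), and `group_into_pods` are all hypothetical names. Drives that share every key are pooled, and each pool is cut into full batches of 45; leftovers wait for more matching drives.

```python
from collections import defaultdict
from dataclasses import dataclass

POD_SIZE = 45  # drives per Storage Pod

@dataclass(frozen=True)
class Drive:
    serial: str
    manufacturer: str
    model: str
    drive_type: str  # hypothetical stand-in for the "type" grouping key
    rpm: int         # spindle speed

def group_into_pods(drives: list[Drive]) -> tuple[list[list[Drive]], list[Drive]]:
    """Pool drives that match on manufacturer, model, type, and spindle speed,
    then cut each pool into full batches of POD_SIZE drives."""
    pools: dict[tuple, list[Drive]] = defaultdict(list)
    for d in drives:
        pools[(d.manufacturer, d.model, d.drive_type, d.rpm)].append(d)

    batches: list[list[Drive]] = []
    leftovers: list[Drive] = []
    for members in pools.values():
        full = len(members) // POD_SIZE * POD_SIZE  # drives that fill whole Pods
        batches.extend(members[i:i + POD_SIZE] for i in range(0, full, POD_SIZE))
        leftovers.extend(members[full:])  # not enough to fill another Pod yet
    return batches, leftovers
```

With 50 matching drives, this yields one 45-drive batch and five leftovers; drives with different spindle speeds never land in the same batch.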

Getting SMART

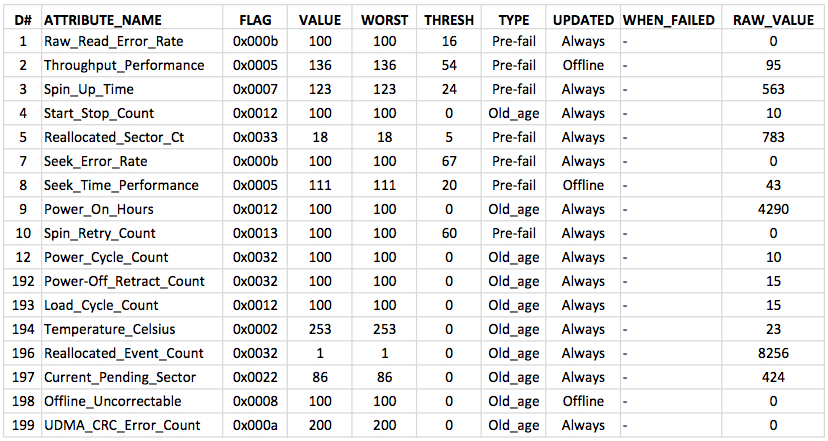

Now that Stephen is in his Storage Pod, the first thing we do is take a SMART snapshot of Stephen and each of his 44 new friends, i.e., the other 4TB drives in the Storage Pod. SMART stands for Self-Monitoring, Analysis, and Reporting Technology; it is built into hard drives to detect and report on various indicators of reliability. Each drive manufacturer defines the set of attributes and their associated threshold values for each drive model. The SMART snapshot serves two functions. First, it is used to identify any variances that could indicate real or potential problems with a given drive. Second, it establishes a baseline for the drive that we can compare against in the future.

Sample SMART output for a Seagate 4TB drive.
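A snapshot can be modeled as a table of attributes. The fields below mirror the VALUE, WORST, THRESH, and RAW_VALUE columns that `smartctl -A` prints; the class and function names are hypothetical, and this is a sketch rather than Backblaze's code. A common reading of SMART is that an attribute has failed when its normalized value drops to or below a nonzero threshold.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SmartAttribute:
    attr_id: int
    name: str
    value: int   # normalized current value; higher is healthier
    worst: int   # lowest normalized value recorded so far
    thresh: int  # manufacturer-defined failure threshold
    raw: int     # raw counter; its meaning varies by attribute and vendor

# A snapshot maps attribute ID -> attribute for one drive.
Snapshot = dict[int, SmartAttribute]

def failing_attributes(snapshot: Snapshot) -> list[SmartAttribute]:
    """Attributes whose normalized value has fallen to or below the threshold.
    A threshold of 0 is treated as 'never fails'."""
    return [a for a in snapshot.values() if a.thresh > 0 and a.value <= a.thresh]

# Hypothetical store of first snapshots, keyed by drive serial, kept as baselines.
baselines: dict[str, Snapshot] = {}
```

The values in the usage below are illustrative: a drive whose reallocated-sector attribute sits at 100 against a threshold of 10 passes, while one that has dropped to 9 is flagged.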

Setting Up and Syncing the RAID Arrays

The first step in setting up the RAID arrays is to strip off any file systems or partitions installed on the drives. New internal drives are almost always clean before we start this process. External drives, such as Stephen, often come preformatted by the manufacturer and need to be cleaned. In either case, we make sure all the drives are clean before we start.

Each Storage Pod of 45 drives is logically divided into three RAID arrays, each using RAID 6 for redundancy. Given the physical layout of a Storage Pod, three rows of 15 drives each, you might assume each row is a RAID array, but it’s not. Instead, every third drive in a row is a member of the same RAID array. This spreads each array across all nine of the backplanes the drives plug into, and it also minimizes the impact on any one array if a backplane fails.
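To see why interleaving helps, the layout can be modeled in a few lines of Python. The text does not say how slots map to backplanes, so this sketch assumes (hypothetically) three backplanes per row, each serving five adjacent slots. It counts the most members any one array would lose if a single backplane failed, for the interleaved layout and for a naive row-per-array layout.

```python
ROWS, SLOTS_PER_ROW, ARRAYS = 3, 15, 3
# Assumption: 9 backplanes, 3 per row, each serving 5 adjacent slots.
BACKPLANES_PER_ROW = 3
SLOTS_PER_BACKPLANE = SLOTS_PER_ROW // BACKPLANES_PER_ROW

def raid_array(slot: int) -> int:
    """Every third drive in a row belongs to the same RAID array."""
    return slot % ARRAYS

def backplane(row: int, slot: int) -> int:
    """Hypothetical wiring: backplane index 0-8 for a drive position."""
    return row * BACKPLANES_PER_ROW + slot // SLOTS_PER_BACKPLANE

def max_members_lost(array_of) -> int:
    """Most members any one array loses when a single backplane fails."""
    counts: dict[tuple[int, int], int] = {}
    for row in range(ROWS):
        for slot in range(SLOTS_PER_ROW):
            key = (array_of(row, slot), backplane(row, slot))
            counts[key] = counts.get(key, 0) + 1
    return max(counts.values())

interleaved = max_members_lost(lambda row, slot: raid_array(slot))
row_per_array = max_members_lost(lambda row, slot: row)
print(interleaved, row_per_array)  # 2 5
```

RAID 6 keeps an array readable with up to two failed members. Under this assumed wiring, a single backplane failure leaves every interleaved array degraded but intact, whereas a row-per-array layout would lose five members of one array at once.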

Our next step is the initial burn-in of the drives as they are built into RAID arrays. This requires that every block on every drive be read and checked. For a 45-drive Storage Pod, this step typically takes anywhere from two to five days, depending on the drives being tested. We use a “day-per-terabyte” rule of thumb to estimate the time needed: for example, if a Storage Pod has 4TB drives, we expect burn-in to take four days.
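The rule of thumb is simple enough to write down directly. In this minimal sketch, `burn_in_estimate` and the start date are hypothetical. The estimate is keyed on per-drive capacity rather than the Pod's total capacity, which matches the 4TB-drives-to-four-days example in the text.

```python
from datetime import datetime, timedelta

def burn_in_estimate(capacity_tb: float, start: datetime) -> tuple[float, datetime]:
    """Apply the 'day-per-terabyte' rule of thumb: roughly one day of burn-in
    per terabyte of per-drive capacity. Returns (days, estimated finish time)."""
    days = capacity_tb * 1.0  # one day per TB
    return days, start + timedelta(days=days)

days, finish = burn_in_estimate(4, datetime(2024, 1, 1, 9, 0))
print(days, finish)  # 4.0 2024-01-05 09:00:00
```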

When the burn-in process completes, we take another SMART snapshot of each of the drives. To pass the burn-in phase, the RAID arrays have to be successfully built and synced, and all of the drives have to pass a SMART review that includes a comparison to the first SMART snapshot taken earlier. If anything has failed along the way, the failed component is identified and replaced, and the burn-in phase is restarted from the beginning. We start from the beginning because we can never be sure what might have been disturbed while fixing the failure, and, more importantly, spending a few extra hours now to rerun the entire test has proven to save us countless hours later.
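The pass criteria and the restart-from-the-beginning policy can be sketched as a loop. Everything here is hypothetical: `BurnInResult`, `burn_in_until_clean`, the injected `run_burn_in` and `replace` callables, and the `max_attempts` cap are illustrative, not Backblaze's tooling. The key point is that a failure never resumes a partial run; the whole phase starts over after the replacement.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class BurnInResult:
    arrays_synced: bool  # did all three RAID arrays build and sync?
    # Components that failed, e.g. serials of drives that failed SMART review.
    failed_components: list[str] = field(default_factory=list)

def burn_in_until_clean(run_burn_in: Callable[[], BurnInResult],
                        replace: Callable[[str], None],
                        max_attempts: int = 5) -> int:
    """Run the burn-in phase; after any failure, replace what failed and restart
    the whole phase from the beginning. Returns the number of attempts used."""
    for attempt in range(1, max_attempts + 1):
        result = run_burn_in()
        if result.arrays_synced and not result.failed_components:
            return attempt  # passed: arrays synced and every drive is clean
        for component in result.failed_components:
            replace(component)
    raise RuntimeError(f"burn-in still failing after {max_attempts} attempts")
```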

Shipping and Racking

Once the burn-in phase is done, the Storage Pods are packaged up and shipped to a Backblaze data center. Whenever possible, we use a Ford Transit to transport the Storage Pods, as the Transit's car-like suspension makes for a smooth ride. The only trouble is that some data centers only have loading docks built for semi-trucks, and Ford Transits are not loading dock height. Since Storage Pods weigh about 150 lbs, lifting each of them to loading dock height is real work. When we can’t use a Ford Transit, we prefer trucks with air-ride suspension over trucks with just leaf springs. Maybe it’s just the condition of our highways here in the USA, but Storage Pods shipped on trucks with just leaf springs failed the load testing phase (described next) more often than those treated to the air-ride experience.

Shock sensors on Storage Pods being readied for transport.

Once a Storage Pod arrives at a data center, it is assigned to a physical location, given an identity, set up with a network address, wired into our monitoring system and scheduled for load testing.

Load Testing

The goal of a load test is to exercise every component in the Storage Pod to prove it can work as a unit. A key part of the process consists of creating directories, filling them with data files, reading and validating the data, and then finally deleting the files. The test data is representative of the data we expect from users, both in type (documents, pictures, videos, etc.) and in size (tiny, small, big, and ginormous). Depending on the manufacturer and model of the hard drive used, load testing can take from 12 hours to three days.
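The create, fill, read-and-validate, delete cycle can be sketched in Python. This is an illustration under stated assumptions, not the real test harness: `load_test` and the byte sizes in `SIZE_CLASSES` are hypothetical, and the sizes are scaled far down so the sketch runs quickly. Each file's SHA-256 digest is recorded at write time and checked on read-back.

```python
import hashlib
import os
import tempfile

# Hypothetical size classes, scaled far down so the sketch runs quickly.
SIZE_CLASSES = {"tiny": 16, "small": 4 * 1024, "big": 256 * 1024, "ginormous": 4 * 1024 * 1024}

def load_test(root: str, dirs: int = 3, files_per_class: int = 2) -> int:
    """Create directories, fill them with random files, read every file back and
    verify its SHA-256 digest, then delete everything. Returns files verified."""
    expected: dict[str, str] = {}
    for d in range(dirs):
        dir_path = os.path.join(root, f"dir{d}")
        os.makedirs(dir_path)
        for label, size in SIZE_CLASSES.items():
            for n in range(files_per_class):
                path = os.path.join(dir_path, f"{label}-{n}.bin")
                data = os.urandom(size)
                with open(path, "wb") as f:
                    f.write(data)
                expected[path] = hashlib.sha256(data).hexdigest()

    # Read every file back and compare against the digest taken at write time.
    for path, digest in expected.items():
        with open(path, "rb") as f:
            if hashlib.sha256(f.read()).hexdigest() != digest:
                raise RuntimeError(f"data mismatch in {path}")

    # Clean up: delete the files, then the now-empty directories.
    for path in expected:
        os.remove(path)
    for d in range(dirs):
        os.rmdir(os.path.join(root, f"dir{d}"))
    return len(expected)

if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(load_test(tmp))  # 24 files: 3 dirs x 4 size classes x 2 each
```

One caveat on the design: reading data back immediately after writing it is mostly served from the operating system's page cache. A real load test would need to defeat caching, for example by writing far more data than fits in RAM, before the reads say anything about the disks themselves.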

One thing we’ve observed over the years is that if a Hitachi drive is going to fail during the load test, it will usually fail early and hard: it just dies. On the other hand, if a Seagate drive is going to fail during a load test, it will usually fail later in the test, and it often fails soft, meaning it continues to operate but one or more of its SMART attributes are out of compliance.

When the load test completes, another SMART snapshot is taken for each drive. The load test is a success when the Storage Pod, as a unit, demonstrates that it can handle the loads imposed by the test, and when each individual drive proves it is ready and stable. Proving the drives are ready means reviewing the current SMART snapshot and also comparing the current and previous SMART snapshots to discover any trending changes, even when every value is still within its threshold.
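A trend check compares raw counters between two snapshots and flags growth even while the normalized values are still comfortably above threshold. This is the kind of signal that catches a drive failing soft. The watch list below uses real SMART attribute IDs, but choosing these four is an assumption for illustration, not Backblaze's actual criteria; `trending_changes` is likewise a hypothetical name.

```python
# Illustrative watch list: raw counters where any growth between snapshots is
# worth a second look. The choice of attributes is an assumption.
WATCHED_RAW = {
    5: "Reallocated_Sector_Ct",
    187: "Reported_Uncorrect",
    197: "Current_Pending_Sector",
    198: "Offline_Uncorrectable",
}

def trending_changes(previous: dict[int, int],
                     current: dict[int, int]) -> dict[str, tuple[int, int]]:
    """Compare raw values from two snapshots (attribute ID -> raw value) and
    report watched counters that grew, as name -> (before, after)."""
    changes: dict[str, tuple[int, int]] = {}
    for attr_id, name in WATCHED_RAW.items():
        before = previous.get(attr_id, 0)  # a missing attribute is treated as 0
        after = current.get(attr_id, 0)
        if after > before:
            changes[name] = (before, after)
    return changes
```

For example, a drive whose reallocated-sector count rose from 0 after burn-in to 8 after the load test would be reported as `{"Reallocated_Sector_Ct": (0, 8)}`, even though 8 reallocated sectors may still be well within the manufacturer's threshold.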

If anything fails during load testing, the failed component is identified and replaced, and the load test is rerun. If the failed component is a hard drive, the replacement drive first needs to be resynced into the RAID array before the load test can be rerun.

Production

Each Storage Pod starts as a hunk of red metal and a collection of parts in bins and boxes. After it is assembled, we ruthlessly put each Storage Pod through its paces to prove it is ready to be a Backblaze Storage Pod. When a Storage Pod passes the testing process, it is added to our production group and enabled to accept customer data.

Stephen, We Hardly Knew Ye

Stephen failed during load testing. In typical Seagate fashion, he failed toward the end of the load test. Unusually for a Seagate drive, though, he failed hard and died hard. He was a rebel. He was degaussed and recycled through a local school’s PTA e-cycle program and hopefully lives on as a blender or a beer can. Long live Stephen.