This is part two of a series on the factors that an organization needs to consider when opening a data center and the challenges that must be met in the process.

In Part 1 of this series, we looked at the different types of data centers, the importance of location in planning a data center, data center certification, and the single most expensive factor in running a data center, power.

In Part 2, we continue to look at factors that need to considered both by those interested in a dedicated data center and those seeking to colocate in an existing center.

Power (continued from Part 1)

In part 1, we began our discussion of the power requirements of data centers.

As we discussed, redundancy and failover are primary requirements for data center power. A redundantly designed power supply system is also a necessity for maintenance, as it enables repairs to be performed on one network, for example, without having to turn off servers, databases, or electrical equipment.

Power Path

The common critical components of a data center’s power flow are:

- Utility Supply

- Generators

- Transfer Switches

- Distribution Panels

- Uninterruptible Power Supplies (UPS)

- PDUs

Utility Supply is the power that comes from one or more utility grids. While most of us consider the grid to be our primary power supply (hats off to those of you who manage to live off the grid), politics, economics, and distribution make utility supply power susceptible to outages, which is why data centers must have autonomous power available to maintain availability.

Generators are used to supply power when the utility supply is unavailable. They convert mechanical energy, usually from motors, to electrical energy.

Transfer Switches are used to transfer electric load from one source or electrical device to another, such as from one utility line to another, from a generator to a utility, or between generators. The transfer could be manually activated or automatic to ensure continuous electrical power.

Distribution Panels get the power where it needs to go, taking a power feed and dividing it into separate circuits to supply multiple loads.

A UPS, as we touched on earlier, ensures that continuous power is available even when the main power source isn’t. It often consists of batteries that can come online almost instantaneously when the current power ceases. The power from a UPS does not have to last a long time as it is considered an emergency measure until the main power source can be restored. Another function of the UPS is to filter and stabilize the power from the main power supply.

Data center UPSs

PDU stands for the Power Distribution Unit and is the device that distributes power to the individual pieces of equipment.

Network

After power, the networking connections to the data center are of prime importance. Can the data center obtain and maintain high-speed networking connections to the building? With networking, as with all aspects of a data center, availability is a primary consideration. Data center designers think of all possible ways service can be interrupted or lost, even briefly. Details such as the vulnerabilities in the route the network connections make from the core network (the backhaul) to the center, and where network connections enter and exit a building, must be taken into consideration in network and data center design.

Routers and switches are used to transport traffic between the servers in the data center and the core network. Just as with power, network redundancy is a prime factor in maintaining availability of data center services. Two or more upstream service providers are required to ensure that availability.

How fast a customer can transfer data to a data center is affected by: 1) the speed of the connections the data center has with the outside world, 2) the quality of the connections between the customer and the data center, and 3) the distance of the route from customer to the data center. The longer the length of the route and the greater the number of packets that must be transferred, the more significant a factor will be played by latency in the data transfer. Latency is the delay before a transfer of data begins following an instruction for its transfer. Generally latency, not speed, will be the most significant factor in transferring data to and from a data center. Packets transferred using the TCP/IP protocol suite, which is the conceptual model and set of communications protocols used on the internet and similar computer networks, must be acknowledged when received (ACK’d) and requires a communications roundtrip for each packet. If the data is in larger packets, the number of ACKs required is reduced, so latency will be a smaller factor in the overall network communications speed.

Latency generally will be less significant for data storage transfers than for cloud computing. Optimizations such as multi-threading, which is used in Backblaze’s Cloud Backup service, will generally improve overall transfer throughput if sufficient bandwidth is available.

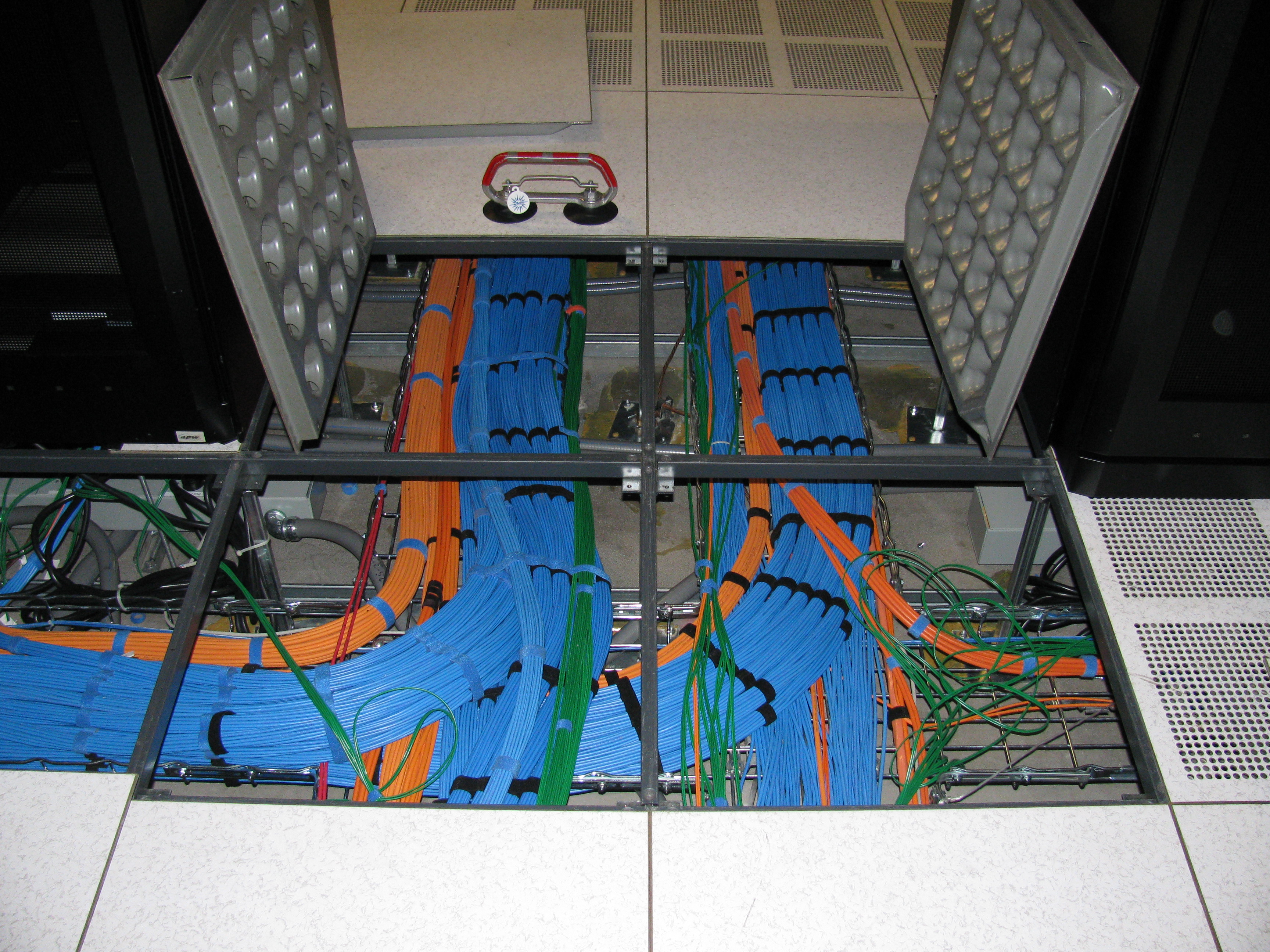

Data center telecommunications equipment. Image originally by Gary Stevens of Hosting Canada. Image from Wikimedia.

Data center under floor cable runs

Cooling

Computer, networking, and power generation equipment generates heat, and there are a number of solutions employed to rid a data center of that heat. The location and climate of the data center is of great importance to the data center designer because the climatic conditions dictate to a large degree what cooling technologies should be deployed that in turn affect the power used and the cost of using that power. The power required and cost needed to manage a data center in a warm, humid climate will vary greatly from managing one in a cool, dry climate. Innovation is strong in this area and many new approaches to efficient and cost-effective cooling are used in the latest data centers.

Switch’s uninterruptible, multi-system, HVAC Data Center Cooling Units

There are three primary ways data center cooling can be achieved:

Room Cooling cools the entire operating area of the data center. This method can be suitable for small data centers, but becomes more difficult and inefficient as IT equipment density and center size increase.

Row Cooling concentrates on cooling a data center on a row by row basis. In its simplest form, hot aisle/cold aisle data center design involves lining up server racks in alternating rows with cold air intakes facing one way and hot air exhausts facing the other. The rows composed of rack fronts are called cold aisles. Typically, cold aisles face air conditioner output ducts. The rows the heated exhausts pour into are called hot aisles. Typically, hot aisles face air conditioner return ducts.

Rack Cooling tackles cooling on a rack by rack basis. Air-conditioning units are dedicated to specific racks. This approach allows for maximum densities to be deployed per rack. This works best in data centers with fully loaded racks, otherwise there would be too much cooling capacity, and the air-conditioning losses alone could exceed the total IT load.

Security

Data Centers are high-security facilities as they house business, government, and other data that contains personal, financial, and other secure information about businesses and individuals.

This list contains the physical-security considerations when opening or co-locating in a data center:

Layered Security Zones. Systems and processes are deployed to allow only authorized personnel in certain areas of the data center. Examples include keycard access, alarm systems, mantraps, secure doors, and staffed checkpoints.

Physical Barriers. Physical barriers, fencing and reinforced walls are used to protect facilities. In a colocation facility, one customers’ racks and servers are often inaccessible to other customers colocating in the same data center.

Backblaze racks secured in the data center

Monitoring Systems. Advanced surveillance technology monitors and records activity on approaching driveways, building entrances, exits, loading areas, and equipment areas. These systems also can be used to monitor and detect fire and water emergencies, providing early detection and notification before significant damage results.

Top-tier providers evaluate their data center security and facilities on an ongoing basis. Technology becomes outdated quickly, so providers must stay-on-top of new approaches and technologies in order to protect valuable IT assets.

To pass into high security areas of a data center requires passing through a security checkpoint where credentials are verified.

Data centers are careful to control access to all critical and sensitive areas of the site

Facilities and Services

As competition increases among data center colocation providers, expect to see more and more value-added services and facilities.These might include conference rooms, offices, and access to office equipment.

Providers also might offer break rooms, kitchen facilities, storage, and secure loading docks and freight elevators.

Moving into A Data Center

Moving into a data center is a major job for any organization. We wrote a post last year, Desert To Data in 7 Days — Our New Phoenix Data Center, about what it was like to move into our new data center in Phoenix, Arizona.

https://www.backblaze.com/blog/data-center-design/

Visiting a Data Center

Our Director of Product Marketing Andy Klein wrote a popular post last year on what it’s like to visit a data center called A Day in the Life of a Data Center.

https://www.backblaze.com/blog/day-life-datacenter-part/

Would you Like to Know More about The Challenges of Opening and Running a Data Center?

That’s it for part 2 of this series. If readers are interested, we could write a post about some of the new technologies and trends affecting data center design and use. Please let us know in the comments.

![]() Here’s a tip on finding all the posts tagged with data center on our blog. Just follow https://www.backblaze.com/blog/tag/data-center/.

Here’s a tip on finding all the posts tagged with data center on our blog. Just follow https://www.backblaze.com/blog/tag/data-center/.