“What is Cloud Storage?” is a series of posts for business leaders and entrepreneurs interested in using the cloud to scale their business without sinking millions in capital into infrastructure. Although the underlying ideas are relatively simple, information about “the Cloud” is overrun with frustratingly unclear jargon. These guides aim to cut through the hype and give you the information you need to convince stakeholders that scaling your business in the cloud is an essential next step. We hope you find them useful, and that you’ll let us know what additional insight you might need. –The Editors

What is Cloud Storage?

“Big Data” is a phrase people love to throw around in advertising and planning documents, even though no two businesses, not even industry leaders, seem to define it the same way. However, everyone can agree on its rapidly growing importance: understanding Big Data and how to leverage it for the greatest value will be a critical organizational concern for the foreseeable future.

So then what does Big Data really mean? Who is it for? Where does it come from? Where is it stored? What makes it so big, anyway? Let’s bring Big Data down to size.

What is Big Data?

First things first: for the purposes of this discussion, “Big” means any amount of data that exceeds what a single organization’s existing systems can store or process. “Data” refers to information stored or processed on a computer. Collectively, then, “Big Data” is a massive volume of structured data, unstructured data, or both, that is too large to process effectively using traditional relational database management systems or applications. In more general terms, when your infrastructure is too small to handle the data your business is generating, whether because the volume is too large, the data moves too fast, or it simply exceeds the current processing capacity of your systems, you’ve entered the realm of Big Data.

Let’s take a look at the defining characteristics.

Characteristics of Big Data

Current definitions of Big Data often use a “triple (or in some cases quadruple) V” framework to describe its characteristics. The Vs stand for velocity, volume, variety, and variability. We’ll define each of them here:

Velocity

Velocity refers to the speed at which data is generated: the pace at which it flows in from sources like business processes, application logs, networks, social media sites, sensors, and mobile devices. This speed determines how rapidly the data must be processed to meet business demands, which in turn determines how much value can be realized from it.
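One way to put a number on velocity is to count how many events arrived within a recent time window. The Python sketch below is a minimal illustration, assuming each incoming event carries a timestamp in seconds and events arrive in order; the `RateMeter` class and its window length are hypothetical, not part of any particular product.

```python
from collections import deque


class RateMeter:
    """Hypothetical helper that tracks event arrivals over a sliding time window."""

    def __init__(self, window_seconds: float = 60.0) -> None:
        self.window = window_seconds
        self.timestamps = deque()  # arrival times still inside the window, oldest first

    def record(self, ts: float) -> None:
        # Assumes events arrive in non-decreasing timestamp order.
        self.timestamps.append(ts)
        self._evict(ts)

    def _evict(self, now: float) -> None:
        # Drop arrivals that are older than the window ending at `now`.
        while self.timestamps and self.timestamps[0] <= now - self.window:
            self.timestamps.popleft()

    def events_per_second(self, now: float) -> float:
        self._evict(now)
        return len(self.timestamps) / self.window


# Simulate one event per second for 30 seconds, then measure over the last 10 seconds.
meter = RateMeter(window_seconds=10)
for t in range(30):
    meter.record(float(t))
print(meter.events_per_second(now=29.0))  # 1.0
```

Tracking this figure over time shows whether your processing capacity is keeping pace with the inflow.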

Volume

The term Big Data itself obviously implies significant volume. But beyond just being “big,” the relative size of a data set is a fundamental factor in determining its value. The volume of data an organization stores also determines how easily that data can be scaled, accessed, and managed. A few examples of high-volume data sets are all of the credit card transactions in the United States on a given day, the entire collection of medical records in Europe, and every video uploaded to YouTube in an hour. A small to moderate volume might be the credit card transactions processed by your own business.

Variety

Variety refers to how many distinct data sources contribute to an organization’s Big Data, along with the intrinsic nature of the data coming from each source. It applies to both structured and unstructured data. Years ago, spreadsheets and databases were the primary sources of data handled by most applications. Today, data is generated in a multitude of formats, such as email, photos, videos, monitoring-device output, PDFs, and audio, each of which demands different handling in analysis applications. This variety of formats can complicate storing, mining, and analyzing the data.
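Each format family usually needs its own parsing and analysis path, so a useful first step is simply tallying what is arriving. As a rough sketch, assuming files are identified by extension alone (real pipelines often inspect content as well), the hypothetical `summarize_variety` function below groups incoming files into broad format families; the mapping itself is illustrative, not a standard.

```python
from collections import Counter
from pathlib import PurePath

# Hypothetical mapping from file extension to a broad format family.
FORMAT_FAMILIES = {
    ".csv": "tabular", ".xlsx": "tabular", ".parquet": "tabular",
    ".json": "semi-structured", ".xml": "semi-structured",
    ".eml": "email", ".jpg": "image", ".png": "image",
    ".mp4": "video", ".mp3": "audio", ".wav": "audio", ".pdf": "document",
}


def summarize_variety(filenames):
    """Count incoming files per format family; unknown extensions count as 'other'."""
    counts = Counter()
    for name in filenames:
        suffix = PurePath(name).suffix.lower()
        counts[FORMAT_FAMILIES.get(suffix, "other")] += 1
    return counts


incoming = ["orders.csv", "promo.EML", "shelf.jpg", "banner.png",
            "call.mp3", "invoice.pdf", "pump7.bin"]
for family, count in summarize_variety(incoming).most_common():
    print(family, count)
```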

Variability

Variability concerns inconsistencies in the data coming from any one source. Where variety considers different inputs from different sources, variability considers different inputs from a single source. These differences can complicate effective management of the data store. Variability may also refer to differences in the speed at which data flows into your storage systems: where velocity refers to the speed of all of your data, variability refers to how different data sets might move at different speeds. Variability can be a concern even when the architecture remains constant, because the data itself is inconsistent.

An example from the health sector is the year-to-year variation in influenza epidemics (when and where they happen, and how they’re reported in different health systems) and in vaccinations (where they are and aren’t available).
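Because variability is about one source disagreeing with itself, a simple check is to compare the “shape” of each record that source sends. The sketch below assumes a hypothetical weekly case-report feed delivered as Python dictionaries (the field names are invented for illustration) and counts how many distinct field-and-type combinations appear:

```python
from collections import Counter


def schema_signature(record: dict) -> tuple:
    """Describe a record's shape as sorted (field name, value type) pairs."""
    return tuple(sorted((key, type(value).__name__) for key, value in record.items()))


def find_variability(records: list) -> Counter:
    """Count how many records from a single source share each distinct shape."""
    return Counter(schema_signature(r) for r in records)


# Hypothetical feed from one reporting system.
feed = [
    {"region": "north", "cases": 120},
    {"region": "south", "cases": "95"},          # count sent as text
    {"region": "east", "cases": 40, "week": 3},  # extra field appears
    {"region": "west", "cases": 88},
]
shapes = find_variability(feed)
print(len(shapes))  # 3
```

Any result above one signals that downstream processing will need to reconcile the differences before analysis.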

Understanding the makeup of Big Data in terms of Velocity, Volume, Variety, and Variability is key when planning Big Data solutions. This fundamental terminology will help you communicate effectively with everyone involved in decision making when you bring Big Data solutions to your team or your wider business. Whether you are pitching solutions, engaging consultants or vendors, or hearing out the proposals of the IT group, a shared terminology is crucial.

What is Big Data Used For?

Businesses use Big Data to try to predict future customer behavior based on past patterns and trends. Effective predictive analytics are the crystal ball organizations look to for insight into what their customers want and when they want it. In theory, the more data collected, the more patterns and trends the business can identify. These insights can potentially make all the difference to a successful customer acquisition and retention strategy, and help create loyal advocates for a business.
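Real predictive analytics rely on far richer models, but the core idea of learning from past patterns can be shown with the simplest possible trend model. The sketch below is an illustration under stated assumptions, not a recommended forecasting method: it fits a straight line to a hypothetical series of monthly order counts using ordinary least squares and projects that line forward.

```python
def linear_trend_forecast(history: list, steps_ahead: int = 1) -> float:
    """Fit y = a + b*x by ordinary least squares (x = 0, 1, 2, ...) and extrapolate."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two observations to fit a trend")
    xs = range(n)
    mean_x = (n - 1) / 2
    mean_y = sum(history) / n
    # Slope = covariance(x, y) / variance(x).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, history))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    # Evaluate the fitted line `steps_ahead` periods past the last observation.
    return intercept + slope * (n - 1 + steps_ahead)


monthly_orders = [100, 110, 120, 130]  # hypothetical order counts for four months
print(linear_trend_forecast(monthly_orders))  # 140.0
```

More history lets a model separate genuine trends from noise, which is the practical reason larger data sets tend to support better predictions.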

In this case, bigger is definitely better! But the method an organization chooses to address its Big Data needs will be a pivotal marker of success in the coming years. Choosing your approach begins with understanding the sources of your data.

Sources of Big Data

Today’s world is incontestably digital: an endless array of gadgets and devices function as our trusted allies on a daily basis. While helpful, these constant companions also generate more and more data every day. Smartphones, GPS technology, social media, surveillance cameras, and machine sensors (and the growing number of users behind them) are all producing reams of data moment to moment, and the total has grown exponentially, from 1 zettabyte of customer data produced in 2009 to more than 35 zettabytes in 2020.
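Those growth figures can be turned into an implied annual rate. As a rough sketch, assuming steady compound growth between the two endpoints (real growth is rarely that smooth), going from 1 to 35 zettabytes over the 11 years from 2009 to 2020 works out to roughly 38% per year:

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# Figures from the text: 1 ZB in 2009, more than 35 ZB in 2020.
rate = implied_annual_growth(start=1, end=35, years=2020 - 2009)
print(f"{rate:.1%}")  # 38.2%
```

Because the 2020 figure is “more than” 35 zettabytes, this rate is a lower bound.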

If your business uses an app to receive and process customer orders, logs extensive point-of-sale retail data, or runs massive email marketing campaigns, you may already have sources of untapped insight into your customers.

Once you understand the sources of your data, the next step is understanding the methods for housing and managing it. Data Warehouses and Data Lakes are two of the primary types of storage and maintenance systems that you should be familiar with.

Where Is Big Data Stored? Data Warehouses & Data Lakes

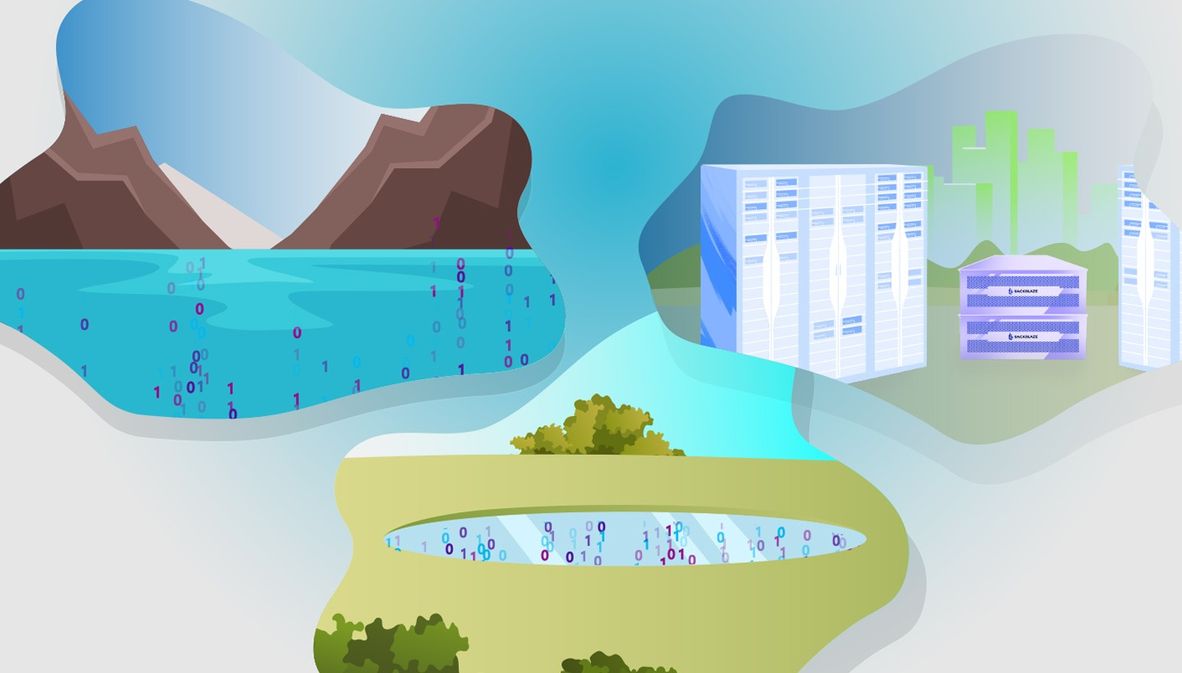

Although both Data Lakes and Data Warehouses are widely used for Big Data storage, they are not interchangeable terms.

A Data Warehouse is an electronic system used to organize information. It goes beyond the capabilities of a traditional relational database, which typically houses and organizes data generated by a single source.

How Do Data Warehouses Work?

A Data Warehouse is a repository for structured, filtered data that has already been processed for a specific purpose. A warehouse combines information from multiple sources into a single comprehensive database.

For example, in the retail world, a Data Warehouse may consolidate customer info from point-of-sale systems, the company website, consumer comment cards, and mailing lists. This information can then be used for distribution and marketing purposes: to track inventory movements and customer buying habits, manage promotions, and determine pricing policies.
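This consolidation step is commonly described as extract, transform, load (ETL). As a minimal sketch, assuming three hypothetical source extracts and using Python’s built-in `sqlite3` module as a stand-in for a real warehouse, the example below maps each source onto one shared schema keyed by a normalized email address and loads the result into a single table:

```python
import sqlite3

# Hypothetical raw extracts from three retail sources, each with its own field names.
pos_sales = [{"cust_email": "ana@example.com", "amount": 42.50, "store": "Downtown"}]
web_orders = [{"email": "ANA@example.com ", "total_usd": 18.00}]
mailing_list = [{"Email": "ben@example.com", "zip": "94107"}]


def build_warehouse() -> sqlite3.Connection:
    """Transform each source into one shared schema and load it into a single table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customer_activity (email TEXT, source TEXT, amount REAL)")
    rows = []
    # Transform: normalize the customer key (trim + lowercase) and map each source's fields.
    for r in pos_sales:
        rows.append((r["cust_email"].strip().lower(), "pos", r["amount"]))
    for r in web_orders:
        rows.append((r["email"].strip().lower(), "web", r["total_usd"]))
    for r in mailing_list:
        rows.append((r["Email"].strip().lower(), "mailing_list", None))
    # Load: one consolidated table the whole business can query.
    conn.executemany("INSERT INTO customer_activity VALUES (?, ?, ?)", rows)
    conn.commit()
    return conn


conn = build_warehouse()
query = (
    "SELECT email, COUNT(*), COALESCE(SUM(amount), 0) "
    "FROM customer_activity GROUP BY email ORDER BY email"
)
for email, n_records, spend in conn.execute(query):
    print(email, n_records, spend)
```

The transform step is where most warehouse effort goes: once every source agrees on what a “customer” is, cross-source questions become a single query.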

The Data Warehouse may also incorporate information about company employees, such as demographic data, salaries, and schedules. This type of information can be used to inform hiring practices, set Human Resources policies, and help guide other internal practices.

Data Warehouses are fundamental to the efficiency of modern life. For instance:

Have a plane to catch?

Airline systems rely on Data Warehouses for many operational functions like route analysis, crew assignments, frequent flyer programs, and more.

Have a headache?

The healthcare sector uses Data Warehouses to aid organizational strategy, help predict patient outcomes, generate treatment reports, and cross-share information with insurance companies, medical aid services, and so forth.

Are you a solid citizen?

In the public sector, Data Warehouses are mainly used for gathering intelligence and assisting government agencies in maintaining and analyzing individual tax and health records.

Playing it safe?

In investment and insurance sectors, the warehouses are mainly used to detect and analyze data patterns reflecting customer trends, and to continuously track market fluctuations.

Have a call to make?

The telecommunications industry makes use of Data Warehouses for management of product promotions, to drive sales strategies, and to make distribution decisions.

Need a room for the night?

The hospitality industry utilizes Data Warehouse capabilities in the tailored design and cost-effective implementation of advertising and marketing programs targeted to reflect client feedback and travel habits.

Data Warehouses are integral to many aspects of the business of everyday life. That said, they aren’t capable of handling the inflow of data in its raw format, like object files or blobs. A Data Lake is the type of repository needed to make use of this raw data. Let’s examine Data Lakes next.

What is a Data Lake?

A Data Lake is a vast pool of raw data whose purpose is not yet defined. This data can be structured, unstructured, or both. At its best, a Data Lake is a secure and adaptable data storage and maintenance system distinguished by its flexibility, agility, and ease of use.

If you’re considering a business approach that involves Data Lakes, you’ll want to look for solutions that have the following characteristics: they should retain all data and support all data types; they should easily adapt to change; and they should provide quick insights to as wide a range of users as you require.
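The idea that most clearly separates a Data Lake from a Data Warehouse is often called schema-on-read: data is stored in whatever form it arrives, and structure is applied only when someone asks a question of it. The sketch below illustrates that idea with a hypothetical in-memory class, `TinyDataLake`; a real deployment would sit on object storage and a query engine rather than a Python dictionary, and the keys and field names here are invented for illustration.

```python
import json
from datetime import datetime, timezone


class TinyDataLake:
    """Hypothetical in-memory stand-in for a data lake: store raw bytes now, interpret later."""

    def __init__(self) -> None:
        self.objects = {}   # key -> raw bytes, exactly as received
        self.metadata = {}  # key -> descriptive metadata about the object

    def put(self, key: str, raw: bytes, source: str, content_type: str) -> None:
        # No schema is enforced on write; only descriptive metadata is recorded.
        self.objects[key] = raw
        self.metadata[key] = {
            "source": source,
            "content_type": content_type,
            "ingested_at": datetime.now(timezone.utc).isoformat(),
        }

    def read_json(self, key: str, fields: list) -> dict:
        # Schema-on-read: choose which fields matter only at query time.
        record = json.loads(self.objects[key])
        return {f: record.get(f) for f in fields}


lake = TinyDataLake()
lake.put("clicks/2024-05-01/evt-001.json",
         b'{"user": "u42", "page": "/pricing", "ms_on_page": 5300}',
         source="web-clickstream", content_type="application/json")
lake.put("sensors/pump-7/reading.bin", b"\x00\x17\x2a",
         source="plant-sensor", content_type="application/octet-stream")
print(lake.read_json("clicks/2024-05-01/evt-001.json", ["user", "page"]))
```

Keeping everything raw preserves flexibility, but it also means the metadata recorded at write time is what keeps the lake searchable later.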

Use Cases for Data Lakes

Data Lakes are most helpful when working with streaming data, like the sorts of information gathered from machine sensors, live event-based data streams, clickstream tracking, or product/server logs.

Deployments of Data Lakes typically address one or more of the following business use cases:

- Business intelligence and analytics – analyzing streams of data to determine high-level trends and granular, record-level insights. A good example of this is the oil and gas industry, which has used the nearly 1.5 terabytes of data it generates daily to increase its efficiency.

- Data science – unstructured data allows for more possibilities in analysis and exploration, enabling innovative applications of machine learning, advanced statistics and predictive algorithms. State, city, and federal governments around the world are using data science to dig more deeply into the massive amount of data they collect regarding traffic, utilities, and pedestrian behavior to design safer, smarter cities.

- Data serving – Data Lakes are usually an integral part of high-performance architectures for applications that rely on fresh or real-time data, including recommender systems, predictive decision engines, and fraud detection tools. A good example of this use case is the range of Customer Data Platforms available, which pull information from many behavioral and transactional data sources to precisely target marketing to individual customers.

When considered together, the potential applications for Data Lakes in your business seem to promise an endless source of revolutionary insights. But the ongoing maintenance and technical upgrades these data sources require to stay relevant and valuable are substantial. If neglected or mismanaged, Data Lakes quickly devolve. As such, one of the biggest questions to weigh with this approach is whether you have the financial and personnel capacity to manage Data Lakes over the long term.

What is a Data Swamp?

A Data Swamp, put simply, is a Data Lake that no one managed appropriately. Swamps arise when a Data Lake is treated as storage only, without curation, management, retention and lifecycle policies, or metadata. If you plan to work Data Lake-derived insights into your business planning and end up with a Swamp instead, you are going to be sorely disappointed: you’re paying the same amount to store all of your data, but returning zero effective intelligence to your bottom line.
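One practical guard against a Swamp is a regular audit of the lake’s catalog. As a hedged sketch, assuming a hypothetical policy that every dataset must record an owner, a description, and a retention period, the Python below lists the datasets that fall short; the `CatalogEntry` fields and dataset paths are illustrative, not taken from any specific catalog product.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical governance policy: every dataset must carry these fields.
REQUIRED_FIELDS = ("owner", "description", "retention_days")


@dataclass
class CatalogEntry:
    path: str
    owner: Optional[str] = None
    description: Optional[str] = None
    retention_days: Optional[int] = None


def swamp_report(catalog: list) -> dict:
    """Map each dataset path to the governance fields it is missing."""
    report = {}
    for entry in catalog:
        missing = [f for f in REQUIRED_FIELDS if getattr(entry, f) is None]
        if missing:
            report[entry.path] = missing
    return report


catalog = [
    CatalogEntry("clicks/", owner="web-analytics", description="Raw clickstream", retention_days=365),
    CatalogEntry("exports/old_dump/"),
    CatalogEntry("sensors/pump-7/", owner="plant-ops"),
]
for path, missing in swamp_report(catalog).items():
    print(path, "missing:", ", ".join(missing))
```

Running a check like this on a schedule turns “someone should curate the lake” into a concrete, assignable list.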

Final Thoughts on Big Data Maintenance

Any business or organization considering entry into Big Data country will want to plan carefully how it will store, maintain, and analyze its data. Making the right choices at the outset will help you traverse the developing digital landscape with strategic insights that enable informed decisions and keep you ahead of your competitors. We hope this primer on Big Data gives you the confidence to take the appropriate first steps.