Availability, time to first byte, throughput, durability: there are plenty of ways to measure “performance” when it comes to cloud storage. But which measure is best, and how should performance factor in when you’re choosing a cloud storage provider? Other than security and cost, performance is arguably the most important decision criterion, but it’s also the hardest dimension to clarify. It can be highly variable and depends on your own infrastructure, your workload, and all the network connections between your infrastructure and the cloud provider.

Today, I’m walking through how to think strategically about cloud storage performance, including which metrics matter and which may not be as important for you.

First, What’s Your Use Case?

The first thing to keep in mind is how you’re going to be using cloud storage. After all, performance requirements will vary from one use case to another. For instance, you may need greater performance in terms of latency if you’re using cloud storage to serve up software as a service (SaaS) content; however, if you’re using cloud storage to back up and archive data, throughput is probably more important for your purposes.

For something like application storage, you should also have other tools in your toolbox even when you are using hot, fast, public cloud storage, like the ability to cache content on edge servers, closer to end users, with a content delivery network (CDN).

Ultimately, you need to decide which cloud storage metrics are the most important to your organization. Performance is important, certainly, but security or cost may be weighted more heavily in your decision matrix.

What Is Performant Cloud Storage?

Performance can be described using a number of different criteria, including:

- Latency

- Throughput

- Availability

- Durability

I’ll define each of these and talk a bit about what each means when you’re evaluating a given cloud storage provider and how they may affect upload and download speeds.

Latency

- Latency is defined as the time between a client request and a server response. It quantifies the time it takes data to transfer across a network.

- Latency is primarily influenced by physical distance—the farther away the client is from the server, the longer it takes to complete the request.

- If you’re serving content to many geographically dispersed clients, you can use a CDN to reduce the latency they experience.

Latency can be influenced by network congestion, security protocols on a network, or network infrastructure, but the primary cause is generally distance, as we noted above.

Downstream latency is typically measured using time to first byte (TTFB). In the context of surfing the web, TTFB is the time between a page request and when the browser receives the first byte of information from the server. In other words, TTFB is measured by how long it takes between the start of the request and the start of the response, including DNS lookup and establishing the connection using a TCP handshake and TLS handshake if you’ve made the request over HTTPS.
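To make the pieces of TTFB concrete, here is a minimal sketch that adds up the round trips a fresh connection needs before the first byte arrives. It is a back-of-the-envelope model, not a measurement tool: the function name and parameters are hypothetical, and it assumes one round trip for the TCP handshake, one for a full TLS 1.3 handshake (two for TLS 1.2, zero for plain HTTP), and one for the request and the start of the response.

```python
def estimate_ttfb_ms(rtt_ms, dns_ms=0.0, server_ms=0.0, tls_round_trips=1):
    """Rough TTFB estimate for a brand-new connection (illustrative model).

    rtt_ms          -- network round-trip time between client and server
    dns_ms          -- time spent on the DNS lookup (0 if already cached)
    server_ms       -- time the server takes to start producing a response
    tls_round_trips -- 1 for TLS 1.3, 2 for TLS 1.2, 0 for plain HTTP
    """
    tcp_round_trips = 1      # TCP handshake
    request_round_trips = 1  # request out, first response byte back
    round_trips = tcp_round_trips + tls_round_trips + request_round_trips
    return dns_ms + round_trips * rtt_ms + server_ms


# Assumed round-trip times, for illustration only.
print(estimate_ttfb_ms(rtt_ms=10, dns_ms=20, server_ms=15))  # nearby data center
print(estimate_ttfb_ms(rtt_ms=70, dns_ms=20, server_ms=15))  # cross-country
```

Because every handshake step costs a full round trip, the round-trip time is multiplied several times over in this model, which is why distance shows up so strongly in TTFB.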

Let’s say you’re uploading data from California to a cloud storage data center in Sacramento. In that case, you’ll experience lower latency than if your business data is stored in, say, Ohio and has to make the cross-country trip. However, making the “right” decision about where to store your data isn’t quite as simple as that, and the complexity goes back to your use case. If you’re using cloud storage for off-site backup, you may want your data to be stored farther away from your organization to protect against natural disasters. In this case, performance is likely secondary to location—you only need fast enough performance to meet your backup schedule.

Using a CDN to Improve Latency

If you’re using cloud storage to store active data, you can speed up performance by using a CDN. A CDN helps speed content delivery by caching content at the edge, meaning faster load times and reduced latency.

Throughput

- Throughput is a measure of the amount of data passing through a system in a given amount of time.

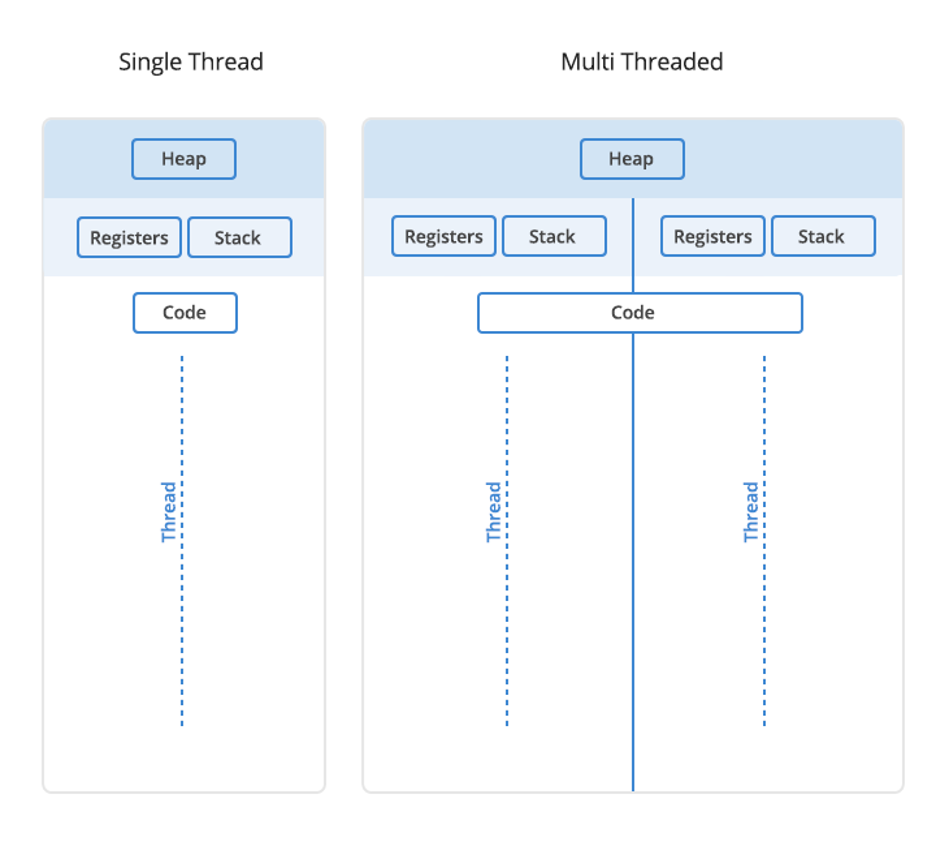

- If you have spare bandwidth, you can use multi-threading to improve throughput.

- Cloud storage providers’ architecture influences throughput, as do their policies around slowdowns (i.e. throttling).

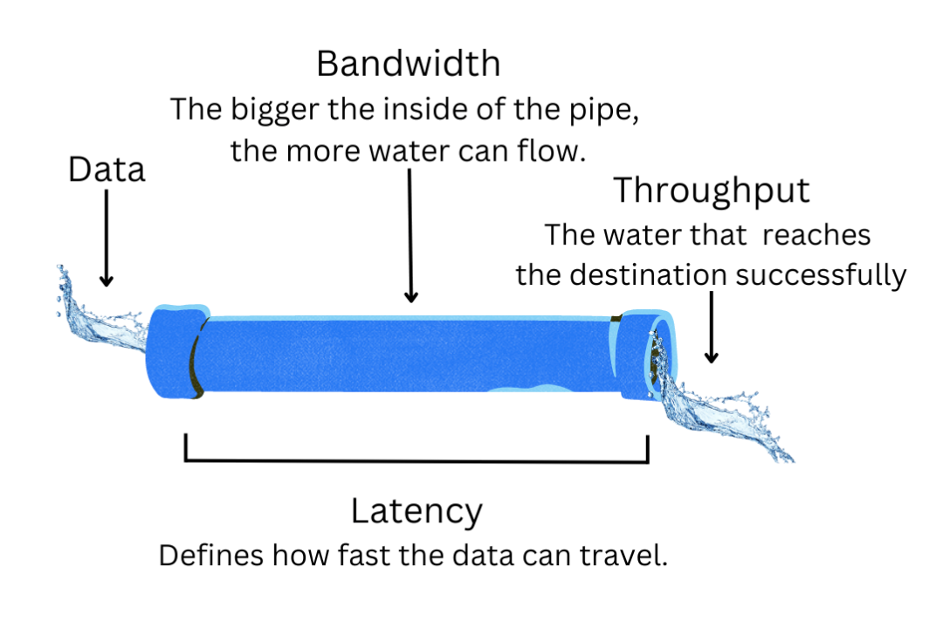

Throughput is often confused with bandwidth. The two concepts are closely related, but different.

To explain them, it’s helpful to use a metaphor: Imagine a swimming pool. The amount of water in it is your file size. When you want to drain the pool, you need a pipe. Bandwidth is the size of the pipe, and throughput is the rate at which water moves through the pipe successfully. So, bandwidth affects your ultimate throughput. Throughput is also influenced by processing power, packet loss, and network topology, but bandwidth is the main factor.

Using Multi-Threading to Improve Throughput

Assuming you have some bandwidth to spare, one of the best ways to improve throughput is to enable multi-threading. Threads are units of execution within processes. When a program transmits files across a network, its threads do the sending. Using more than one thread (multi-threading) to transmit files is, not surprisingly, faster than using just one, although a greater number of threads will require more processing power and memory. To return to our water pipe analogy, multi-threading is like having multiple water pumps (threads) feeding that same pipe. Maybe with one pump, you can only fill 10% of your pipe. But you can keep adding pumps until you reach pipe capacity.
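The pump analogy can be written down as a tiny model. This is a simplified sketch: the function names are hypothetical, every thread is assumed to move data at the same rate, and CPU and memory limits are ignored.

```python
import math


def effective_throughput_mbps(threads, per_thread_mbps, bandwidth_mbps):
    """Throughput grows with each thread until the pipe (bandwidth) is full."""
    if threads < 1:
        raise ValueError("threads must be at least 1")
    return min(threads * per_thread_mbps, bandwidth_mbps)


def threads_to_saturate(per_thread_mbps, bandwidth_mbps):
    """Smallest thread count that fills the available bandwidth."""
    return math.ceil(bandwidth_mbps / per_thread_mbps)


# One pump fills 10% of a 1,000 Mbps pipe (assumed numbers).
print(effective_throughput_mbps(1, 100, 1000))   # 100
print(effective_throughput_mbps(20, 100, 1000))  # 1000, capped by bandwidth
print(threads_to_saturate(100, 1000))            # 10
```

In practice each extra thread adds a little less than the one before it, so treat the saturation point as a starting guess for experimentation rather than a precise answer.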

When you’re using cloud storage with an integration like backup software or a network attached storage (NAS) device, the multi-threading setting is typically found in the integration’s settings. Many backup tools, like Veeam, are already set to multi-thread by default. Veeam automatically makes adjustments based on details like the number of individual backup jobs, or you can configure the number of threads manually. Other integrations, like Synology’s Cloud Sync, also give you granular control over threading so you can dial in your performance.

That said, the gains from increasing the number of threads are limited by the available bandwidth, processing power, and memory. Finding the right setting can involve some trial and error, but the improvements can be substantial (as we discovered when we compared download speeds on different Python versions using single vs. multi-threading).

What About Throttling?

One question you’ll absolutely want to ask when you’re choosing a cloud storage provider is whether they throttle traffic. That means they deliberately slow down your connection for various reasons. Shameless plug here: Backblaze does not throttle, so customers are able to take advantage of all their bandwidth while uploading to B2 Cloud Storage. Many other public cloud services do throttle, although they certainly may not make it widely known, so be sure to ask the question upfront when engaging with a storage provider.

Upload Speed and Download Speed

Your ultimate upload and download speeds will be affected by throughput and latency. Again, it’s important to consider your use case when determining which performance measure is most important for you. Latency is important to application storage use cases where things like how fast a website loads can make or break a potential SaaS customer. With latency being primarily influenced by distance, it can be further optimized with the help of a CDN. Throughput is often the measurement that’s more important to backup and archive customers because it is indicative of the upload and download speeds an end user will experience, and it can be influenced by cloud storage provider practices, like throttling.

Availability

- Availability is the percentage of time a cloud service or a resource is functioning correctly.

- Make sure the availability listed in the cloud provider’s service level agreement (SLA) matches your needs.

- Keep in mind the difference between hot and cold storage—cold storage services like Amazon Glacier offer slower retrieval and response times.

Also called uptime, this metric measures the percentage of time that a cloud service or resource is available and functioning correctly. It’s usually expressed as a percentage, with 99.9% (three nines) or 99.99% (four nines) availability being common targets for critical services. Availability is often backed by SLAs that define the uptime customers can expect and what happens if availability falls below that metric.
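It can help to translate an availability percentage into the downtime it actually permits. The sketch below does that arithmetic. The function name is hypothetical, and it assumes a 365-day year and that downtime is measured across the whole year, which may differ from how a particular SLA defines its measurement period.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def max_downtime_hours_per_year(availability_percent):
    """Hours per year a service can be down and still meet the target."""
    unavailable_fraction = 1 - availability_percent / 100
    return unavailable_fraction * HOURS_PER_YEAR


for target in (99.9, 99.99):
    hours = max_downtime_hours_per_year(target)
    print(f"{target}% -> {hours:.2f} hours ({hours * 60:.0f} minutes) per year")
```

Three nines allows roughly 8.76 hours of downtime a year, while four nines allows about 53 minutes.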

You’ll also want to consider availability if you’re deciding between cold storage and hot storage. Cold storage is lower performing by design. It prioritizes durability and cost-effectiveness over availability. Services like Amazon Glacier and Google Coldline take this approach, offering slower retrieval and response times than their hot storage counterparts. While cost savings are typically a big factor when it comes to considering cold storage, keep in mind that if you do need to retrieve your data, it will take much longer (potentially days instead of seconds), and you may end up paying more to get your data back faster. You should also be aware of the exorbitant egress fees and minimum storage duration requirements for cold storage, unexpected costs that can easily add up.

| | Cold | Hot |
|---|---|---|
| Access Speed | Slow | Fast |
| Access Frequency | Seldom or Never | Frequent |
| Data Volume | Low | High |
| Storage Media | Slower drives, LTO, offline | Faster drives, durable drives, SSDs |
| Cost | Lower | Higher |

Durability

- Durability is the ability of a storage system to consistently preserve data.

- Durability is measured in “nines,” which count the nines in the probability that your data is retrievable after one year of storage.

- We designed the Backblaze B2 Storage Cloud for 11 nines of durability using erasure coding.

Data durability refers to the ability of a data storage system to reliably and consistently preserve data over time, even in the face of hardware failures, errors, or unforeseen issues. It is a measure of data’s long-term resilience and permanence. Highly durable data storage systems ensure that data remains intact and accessible, meeting reliability and availability expectations, making it a fundamental consideration for critical applications and data management.

We usually measure durability, or more precisely annual durability, in “nines,” referring to the number of nines in the probability (expressed as a percentage) that your data is retrievable after one year of storage. We know from our work on Drive Stats that an annual failure rate of 1% is typical for a hard drive. So, if you were to store your data on a single drive, its durability, the probability that it would not fail, would be 99%, or two nines.

The simplest way of improving durability is to replicate data across multiple drives. If one copy is lost, you still have the remaining copies. It’s also simple to calculate the durability with this approach. If you write each file to two drives, you lose data only if both drives fail. Assuming the drives fail independently, we calculate the probability of both failing by multiplying the probabilities of each drive failing, 0.01 x 0.01 = 0.0001, giving a durability of 99.99%, or four nines. While simple, this approach is costly: it incurs a 100% overhead in the amount of storage required to deliver four nines of durability.
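The replication arithmetic generalizes to any number of copies. Here is a minimal sketch with hypothetical function names, assuming drives fail independently and that a failed drive is not replaced during the year.

```python
import math


def replication_loss_probability(annual_failure_rate, copies):
    """Probability that every copy is lost within a year."""
    return annual_failure_rate ** copies


def nines(loss_probability):
    """Number of nines in the durability that matches a loss probability."""
    return -math.log10(loss_probability)


def replication_overhead(copies):
    """Extra storage needed, as a fraction of the original data size."""
    return copies - 1


for copies in (1, 2, 3):
    loss = replication_loss_probability(0.01, copies)
    print(f"{copies} copies: about {nines(loss):.0f} nines, "
          f"{replication_overhead(copies):.0%} overhead")
```

Each additional copy adds two nines at a 1% annual failure rate, but it also adds another 100% of storage overhead.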

Erasure coding is a more sophisticated technique, improving durability with much less overhead than simple replication. An erasure code takes a “message,” such as a data file, and makes a longer message in a way that the original can be reconstructed from the longer message even if parts of the longer message have been lost.

The durability calculation for this approach is much more complex than for replication, as it involves the time required to replace and rebuild failed drives as well as the probability that a drive will fail, but we calculated that we could take advantage of erasure coding in designing the Backblaze B2 Storage Cloud for 11 nines of durability with just 25% overhead in the amount of storage required.

How does this work? Briefly, when we store a file, we split it into 16 equal-sized pieces, or shards. We then calculate four more shards, called parity shards, in such a way that the original file can be reconstructed from any 16 of the 20 shards. We then store the resulting 20 shards on 20 different drives, each in a separate Storage Pod (storage server).

If a drive does fail, it can be replaced with a new drive, and its data rebuilt from the remaining good drives. We open sourced our implementation of Reed-Solomon erasure coding, so you can dive into the source code for more details.
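To compare the 16 + 4 layout with simple replication, the sketch below computes the storage overhead and a deliberately pessimistic loss probability. It is not the calculation behind the 11 nines figure: the function names are hypothetical, drives are assumed to fail independently, and failed drives are assumed never to be replaced or rebuilt during the year. Because real drives are rebuilt quickly, the real durability is far higher than this model suggests.

```python
from math import comb


def storage_overhead(data_shards, parity_shards):
    """Extra storage needed, as a fraction of the original data size."""
    return parity_shards / data_shards


def loss_probability_without_rebuild(data_shards, parity_shards, annual_failure_rate):
    """Chance of losing a file in a year if failed drives are never rebuilt.

    The file survives as long as at least `data_shards` of the shards survive,
    so it is lost only when more than `parity_shards` drives fail.
    """
    total = data_shards + parity_shards
    p = annual_failure_rate
    return sum(
        comb(total, k) * p**k * (1 - p) ** (total - k)
        for k in range(parity_shards + 1, total + 1)
    )


print(f"overhead: {storage_overhead(16, 4):.0%}")
print(f"loss without rebuild: {loss_probability_without_rebuild(16, 4, 0.01):.2e}")
print(f"two-copy replication: {loss_probability_without_rebuild(1, 1, 0.01):.2e}")
```

Even with no rebuilds at all, this model gives 16 + 4 erasure coding a lower chance of loss than two-copy replication, at a quarter of the storage overhead.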

Additional Factors Impacting Cloud Storage Performance

In addition to bandwidth and latency, there are a few additional factors that impact cloud storage performance, including:

- The size of your files.

- The number of files you upload or download.

- Block (part) size.

- The amount of available memory on your machine.

Small files—that is, those less than 5GB—can be uploaded in a single API call. Larger files, from 5MB to 10TB, can be uploaded as “parts”, in multiple API calls. You’ll notice that there is quite an overlap here! For uploading files between 5MB and 5GB, is it better to upload them in a single API call, or split them into parts? What is the optimum part size? For backup applications, which typically split all data into equal-sized blocks, storing each block as a file, what is the optimum block size? As with many questions, the answer is that it depends.

Remember latency? Each API call incurs a more-or-less fixed overhead due to latency. For a 1GB file, assuming a single thread of execution, uploading all 1GB in a single API call will be faster than ten API calls each uploading a 100MB part, since those additional nine API calls each incur some latency overhead. So, bigger is better, right?
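Here is that comparison as a small model. It is a sketch under stated assumptions: the function name is hypothetical, each API call pays the same fixed latency, the throughput is constant, and only one thread is used. The 100 MB/s throughput and 0.1 second latency are made-up numbers for illustration.

```python
import math


def single_thread_upload_seconds(file_bytes, part_bytes, latency_s, throughput_bytes_per_s):
    """Upload time when parts are sent one after another on a single thread."""
    api_calls = math.ceil(file_bytes / part_bytes)
    return api_calls * latency_s + file_bytes / throughput_bytes_per_s


GB = 1_000_000_000
MB = 1_000_000

one_call = single_thread_upload_seconds(1 * GB, 1 * GB, 0.1, 100 * MB)
ten_calls = single_thread_upload_seconds(1 * GB, 100 * MB, 0.1, 100 * MB)
print(f"one call:  {one_call:.1f} s")
print(f"ten calls: {ten_calls:.1f} s")
```

The data transfer time is identical in both cases. The only difference is the nine extra helpings of per-call latency.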

Not necessarily. Multi-threading, as mentioned above, affords us the opportunity to upload multiple parts simultaneously, which improves performance—but there are trade-offs. Typically, each part must be stored in memory as it is uploaded, so more threads means more memory consumption. If the number of threads multiplied by the part size exceeds available memory, then either the application will fail with an out of memory error, or data will be swapped to disk, reducing performance.
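The memory constraint is easy to check before you start a transfer. This is a minimal sketch with hypothetical function names, assuming each thread holds exactly one part in memory at a time and nothing else competes for that memory apart from an optional reserve.

```python
def max_threads_for_memory(available_memory_bytes, part_bytes, reserve_bytes=0):
    """Largest thread count whose in-flight parts fit in memory."""
    usable = available_memory_bytes - reserve_bytes
    return max(usable // part_bytes, 0)


def fits_in_memory(threads, part_bytes, available_memory_bytes):
    """True if threads x part size stays within available memory."""
    return threads * part_bytes <= available_memory_bytes


GIB = 1024 ** 3
MIB = 1024 ** 2

print(max_threads_for_memory(8 * GIB, 100 * MIB))                         # 81
print(max_threads_for_memory(8 * GIB, 100 * MIB, reserve_bytes=2 * GIB))  # 61
print(fits_in_memory(82, 100 * MIB, 8 * GIB))                             # False
```

Halving the part size doubles the number of threads that fit, at the cost of more API calls and therefore more latency overhead.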

Downloading data offers even more flexibility, since applications can specify any portion of the file to download in each API call. Whether uploading or downloading, there is a maximum number of threads that will drive throughput to consume all of the available bandwidth. Exceeding this maximum will consume more memory, but provide no performance benefit. If you go back to our pipe analogy, you’ll have reached the maximum capacity of the pipe, so adding more pumps won’t make things move faster.
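A multi-threaded ranged download can be sketched with Python’s standard `concurrent.futures` module. The `fetch_range` callable here is a hypothetical stand-in for whatever your client library or HTTP code uses to request bytes `start` through `end` (inclusive) of a file, for example with an HTTP `Range` header. The example swaps in an in-memory byte string so it runs without a network connection.

```python
from concurrent.futures import ThreadPoolExecutor


def byte_ranges(total_bytes, part_bytes):
    """Split a file into inclusive (start, end) byte ranges."""
    return [
        (start, min(start + part_bytes, total_bytes) - 1)
        for start in range(0, total_bytes, part_bytes)
    ]


def parallel_download(fetch_range, total_bytes, part_bytes, threads):
    """Fetch all ranges using up to `threads` workers and reassemble them."""
    ranges = byte_ranges(total_bytes, part_bytes)
    with ThreadPoolExecutor(max_workers=threads) as pool:
        # map() yields results in the order of `ranges`, so the parts
        # join back together correctly even if they finish out of order.
        parts = pool.map(lambda r: fetch_range(*r), ranges)
        return b"".join(parts)


# Stand-in for a remote file: slicing plays the role of a ranged request.
remote_file = bytes(range(256)) * 10
fetch = lambda start, end: remote_file[start:end + 1]

result = parallel_download(fetch, len(remote_file), part_bytes=100, threads=4)
print(result == remote_file)  # True
```

This version holds the whole file in memory, which keeps the example short. A real tool would more likely write each part to its offset in a file on disk, and would expose `part_bytes` and `threads` as settings.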

So, what to do to get the best performance possible for your use case? Simple: customize your settings.

Most backup and file transfer tools allow you to configure the number of threads and the amount of data to be transferred per API call, whether that’s block size or part size. If you are writing your own application, you should allow for these parameters to be configured. When it comes to deployment, some experimentation may be required to achieve maximum throughput given available memory.

How to Evaluate Cloud Performance

To sum up, the cloud is increasingly becoming a cornerstone of every company’s tech stack. Gartner predicts that by 2026, 75% of organizations will adopt a digital transformation model predicated on cloud as the fundamental underlying platform. So, cloud storage performance will likely be a consideration for your company in the next few years if it isn’t already.

It’s important to consider that cloud storage performance can be highly subjective and heavily influenced by things like your use case (e.g., backup and archive versus application storage or media workflows), end user bandwidth and throughput, file size, block size, etc. Any evaluation of cloud performance should take these factors into account rather than simply relying on metrics in isolation. And, a holistic cloud strategy will likely have multiple operational schemas to optimize resources for different use cases.

Wait, Aren’t You, Backblaze, a Cloud Storage Company?

Why, yes. Thank you for noticing. We ARE a cloud storage company, and we OFTEN get questions about all of the topics above. In fact, that’s why we put this guide together: our customers and prospects are the best sources of content ideas we can think of. Circling back to the beginning, it bears repeating that performance is one factor to consider in addition to security and cost. (And, hey, we would be remiss not to mention that we’re also one-fifth the cost of AWS S3.) Ultimately, whether or not you choose Backblaze B2 Cloud Storage, we hope the information is useful to you. Let us know if there’s anything we missed.