Solid-state drives (SSDs) are becoming an ever larger part of the data storage landscape. And while our SSD 101 series has covered topics like upgrading, troubleshooting, and recycling your SSDs, we’d like to test one of the more popular claims from SSD proponents: that SSDs fail much less often than our old friend, the hard disk drive (HDD). The claim is usually attributed to SSDs having no moving parts, and it is supported by vendor proclamations and murky mean time between failures (MTBF) computations. All of that is fine for SSD marketing purposes, but for comparing failure rates, we prefer the Drive Stats way: direct comparison. Let’s get started.

What Does Drive Failure Look Like for SSDs and HDDs?

In our quarterly Drive Stats reports, we define hard drive failure as either reactive, meaning the drive is no longer operational, or proactive, meaning we believe that drive failure is imminent. For hard drives, much of the data we use to determine a proactive failure comes from the SMART stats we monitor that are reported by the drive.

As with HDDs, we also record and monitor SMART stats for SSDs. Different SSD models report different SMART stats, with some overlap. To date, we record 31 SMART attributes related to SSDs; 25 of them are listed below.

| # | Description | # | Description |
|---|---|---|---|
| 1 | Read Error Rate | 194 | Temperature Celsius |
| 5 | Reallocated Sectors Count | 195 | Hardware ECC Recovered |
| 9 | Power-on Hours | 198 | Uncorrectable Sector Count |
| 12 | Power Cycle Count | 199 | UltraDMA CRC Error Count |
| 13 | Soft Read Error Rate | 201 | Soft Read Error Rate |
| 173 | SSD Wear Leveling Count | 202 | Data Address Mark Errors |
| 174 | Unexpected Power Loss Count | 231 | Life Left |
| 177 | Wear Range Delta | 232 | Endurance Remaining |
| 179 | Used Reserved Block Count Total | 233 | Media Wearout Indicator |
| 180 | Unused Reserved Block Count Total | 235 | Good Block Count |
| 181 | Program Fail Count Total | 241 | Total LBAs Written |
| 182 | Erase Fail Count | 242 | Total LBAs Read |
| 192 | Unsafe Shutdown Count | | |

For the remaining six (16, 17, 168, 170, 218, and 245), we are unable to find their definitions. Please reach out in the comments if you can shed any light on the missing attributes.

All that said, we are just at the beginning of using SMART stats to proactively fail an SSD. Many of the attributes cited are specific to a drive model or vendor. In addition, as you’ll see, there have been relatively few SSD failures, which limits the data we have for research. As we add more SSDs to our farm and monitor them, we intend to build out our rules for proactive SSD failure. In the meantime, all of the SSDs that have failed to date are reactive failures; that is, they just stopped working.

Comparing Apples to Apples

In the Backblaze data centers, we use both SSDs and HDDs as boot drives in our storage servers. In our case, “boot drive” is something of a misnomer, since these drives also store log files for system access, diagnostics, and more. In other words, these boot drives are regularly reading, writing, and deleting files in addition to their named function of booting a server at startup.

In our first storage servers, we used hard drives as boot drives because they were inexpensive and served the purpose. That continued until mid-2018, when we were able to buy 200GB SSDs for about $50, our top-end price point for a storage server boot drive. It started as an experiment, but it worked out so well that from mid-2018 on we used only SSDs as boot drives in new storage servers and also replaced failed HDD boot drives with SSDs.

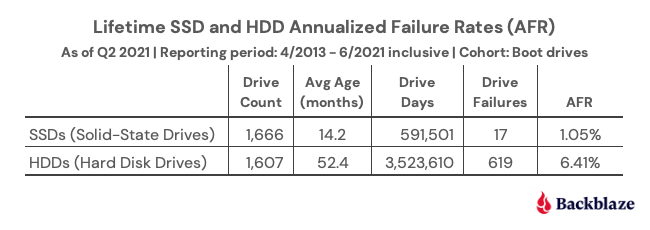

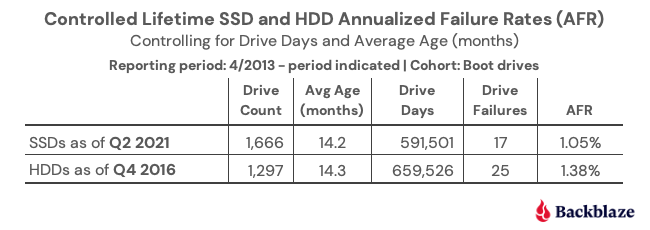

What we have are two groups of drives, SSDs and HDDs, which perform the same functions, have the same workload, and operate in the same environment over time. So naturally, we decided to compare the failure rates of the SSD and HDD boot drives. Below are the lifetime failure rates for each cohort as of Q2 2021.

SSDs Win… Wait, Not So Fast!

It’s over, SSDs win. It’s time to turn your hard drives into bookends and doorstops and buy SSDs. But before you start playing dominoes with your hard drives, consider two factors that go beyond the face value of the table above: average age and drive days.

- The average age of the SSDs is 14.2 months, and the average age of the HDDs is 52.4 months.

- The oldest SSDs are about 33 months old, and the youngest HDDs are 27 months old.

In other words, the ages of the two cohorts barely overlap. The HDDs are, on average, more than three years older than the SSDs (52.4 versus 14.2 months), which places each cohort at a very different point in its lifecycle. If you subscribe to the idea that drives fail more often as they get older, you might want to delay your HDD shredding party for just a bit.

By the way, we’ll be publishing a post in a couple of weeks on how well drive failure rates fit the bathtub curve. Spoiler alert: old drives fail a lot.

The other factor we listed was drive days: the total number of days that all the drives in each cohort have been in operation without failing. Drive days are the observations behind each failure rate, and the two cohorts have very different numbers of them, so their confidence intervals differ widely as well: the fewer the drive days, the wider the interval.
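To make the effect of drive days concrete, here is a minimal Python sketch. It computes AFR as failures per drive-year (drive days / 365), expressed as a percentage, and, as an assumption for illustration only, builds the 95% interval from a normal approximation to a Poisson failure count; this post does not say which interval method produced its numbers. The function names and cohort figures are hypothetical.

```python
import math

def afr(failures: int, drive_days: int) -> float:
    """Annualized failure rate in percent: failures per drive-year, times 100."""
    drive_years = drive_days / 365
    return failures / drive_years * 100

def afr_ci95(failures: int, drive_days: int) -> tuple[float, float]:
    """Approximate 95% interval for the AFR, in percent.

    Assumption: the failure count is treated as Poisson and approximated by a
    normal distribution, so its half-width is 1.96 * sqrt(failures).
    This is rough when there are only a few failures.
    """
    drive_years = drive_days / 365
    half_width = 1.96 * math.sqrt(failures)
    low = max(failures - half_width, 0.0) / drive_years * 100
    high = (failures + half_width) / drive_years * 100
    return low, high

# Two made-up cohorts with the same AFR; the second has ten times the drive days.
for name, failures, drive_days in [("small", 10, 365_000), ("large", 100, 3_650_000)]:
    low, high = afr_ci95(failures, drive_days)
    print(f"{name}: AFR {afr(failures, drive_days):.2f}%, 95% CI {low:.2f}% to {high:.2f}%")
```

Both hypothetical cohorts have a 1.00% AFR, but the one with ten times the drive days gets an interval of roughly 0.80% to 1.20% instead of roughly 0.38% to 1.62%.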

To create a more accurate comparison, we can attempt to control for average age and drive days in our analysis. To do this, we can take the HDD cohort back in time in our records to see where its average age and drive days are similar to those of the SSDs in Q2 2021. That would allow us to compare each cohort at the same point in its lifecycle.
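One way to find that point in time is to score each historical HDD snapshot by how far its average age and drive days are from the current SSD cohort and keep the closest one. The sketch below is hypothetical: the `CohortSnapshot` type, the equal weighting of relative differences, and the example numbers are all assumptions, not how the Q4 2016 quarter was actually chosen.

```python
from dataclasses import dataclass

@dataclass
class CohortSnapshot:
    label: str              # e.g. a quarter name
    avg_age_months: float   # average age of the drives in the cohort
    drive_days: int         # cumulative drive days up to this snapshot

def closest_snapshot(target: CohortSnapshot, history: list[CohortSnapshot]) -> CohortSnapshot:
    """Return the snapshot in history most similar to target in age and drive days.

    Assumption: each dimension is compared as a relative difference so that
    months and drive days (very different magnitudes) carry equal weight.
    """
    def distance(s: CohortSnapshot) -> float:
        age_gap = abs(s.avg_age_months - target.avg_age_months) / target.avg_age_months
        days_gap = abs(s.drive_days - target.drive_days) / target.drive_days
        return age_gap + days_gap
    return min(history, key=distance)

# Made-up values for illustration only.
ssd_now = CohortSnapshot("SSD now", 14.2, 1_000_000)
hdd_history = [
    CohortSnapshot("quarter A", 10.0, 600_000),
    CohortSnapshot("quarter B", 14.0, 950_000),
    CohortSnapshot("quarter C", 26.0, 1_700_000),
]
print(closest_snapshot(ssd_now, hdd_history).label)
```

With these invented numbers, "quarter B" is selected because it is close on both age and drive days.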

Turning back the clock on the HDDs, we used the HDD data from Q4 2016 to create the following comparison.

Suddenly, the annualized failure rate (AFR) difference between SSDs and HDDs is not so massive. In fact, each drive type is within the other’s 95% confidence interval window. That window is fairly wide (plus or minus 0.5%) because of the relatively small number of drive days.
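The "each within the other’s window" test can be written as a small check. This is a hypothetical helper with made-up AFRs and plus or minus 0.5 percentage-point windows; it is only a quick screen, not a formal significance test.

```python
def within(value: float, interval: tuple[float, float]) -> bool:
    """True if value lies inside the closed interval (low, high)."""
    low, high = interval
    return low <= value <= high

def mutually_within(afr_a: float, ci_a: tuple[float, float],
                    afr_b: float, ci_b: tuple[float, float]) -> bool:
    """True if each cohort's AFR falls inside the other cohort's 95% interval."""
    return within(afr_a, ci_b) and within(afr_b, ci_a)

# Made-up AFRs (%) with +/- 0.5 percentage-point windows, for illustration only.
print(mutually_within(1.0, (0.5, 1.5), 1.3, (0.8, 1.8)))  # True
print(mutually_within(1.0, (0.5, 1.5), 1.8, (1.3, 2.3)))  # False
```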

Where does that leave us? We have some evidence that when both types of drives are young (14 months on average in this case), the SSDs fail less often, but not by much. But you don’t buy a drive to last 14 months; you want it to last years. What do we know about that?

Failure Rates Over Time

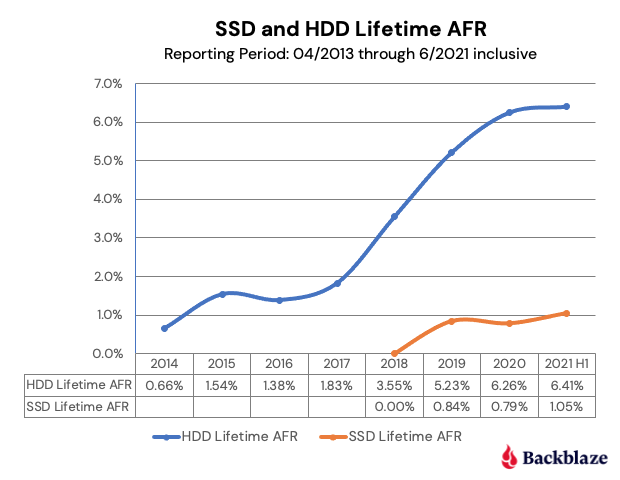

We have data for HDD boot drives going back to 2013 and for SSD boot drives going back to 2018. The chart below is the lifetime AFR for each drive type through Q2 2021.

As the graph shows, beginning in 2018, the HDD boot drive failure rate accelerated. This continued in 2019 and 2020 before leveling off in 2021 (so far). To state the obvious, as the age of the HDD boot drive fleet increased, so did the failure rate.
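The chart plots lifetime AFR, which folds in every failure and drive day from the start of the record rather than just the latest year. Here is a minimal Python sketch of that cumulative calculation, assuming per-year failure and drive-day totals are available; the function name and input shape are hypothetical.

```python
from itertools import accumulate

def lifetime_afr_by_year(yearly: dict[int, tuple[int, int]]) -> dict[int, float]:
    """Return year -> lifetime AFR (%) using every failure and drive day up to that year.

    `yearly` maps a calendar year to (failures that year, drive days that year).
    """
    years = sorted(yearly)
    cum_failures = accumulate(yearly[y][0] for y in years)
    cum_days = accumulate(yearly[y][1] for y in years)
    return {y: f / (d / 365) * 100 for y, f, d in zip(years, cum_failures, cum_days)}

# Made-up counts: the single-year rate jumps from 1% to 3%, the lifetime rate only to 2%.
print(lifetime_afr_by_year({2019: (2, 73_000), 2020: (6, 73_000)}))
```

Because each point includes all earlier years, a lifetime curve moves more slowly than a per-year rate, as the made-up numbers show.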

One point of interest is the similarity of the two curves through their first four data points. For the HDD cohort, year five (2018) was where the failure rate acceleration began. Is the same fate awaiting our SSDs as they age? While we can expect some increase in the AFR as the SSDs age, will it be as dramatic as the HDD line?

Decision Time: SSD or HDD

Where does that leave us in choosing between buying an SSD or an HDD? Given what we know to date, using the failure rate as a factor in your decision is questionable. Once we controlled for age and drive days, the two drive types were similar, and the difference was certainly not enough by itself to justify the extra cost of purchasing an SSD versus an HDD. At this point, you are better off deciding based on other factors: cost, speed required, electricity, form factor requirements, and so on.

Over the next couple of years, as we get a better idea of SSD failure rates, we will be able to decide whether or not to add the AFR to the SSD versus HDD buying guide checklist. Until then, we look forward to continued debate.